Artificial Intelligence Tutorial

Last updated on 13th Sep 2020, Artificial Intelligence, Blog, Tutorials

Artificial Intelligence (AI) is a way of making a computer, a computer-controlled robot, or a piece of software think intelligently, in a manner similar to how intelligent humans think.

AI is accomplished by studying how the human brain thinks and how humans learn, decide, and work while trying to solve a problem, and then using the outcomes of this study as a basis for developing intelligent software and systems.

Goals of AI

- To Create Expert Systems − Systems that exhibit intelligent behavior and that learn, demonstrate, explain, and advise their users.

- To Implement Human Intelligence in Machines − Creating systems that understand, think, learn, and behave like humans.

Programming Without and With AI

Programming without AI and programming with AI differ in the following ways −

| Programming without AI | Programming with AI |
|---|---|
| A computer program without AI can answer the specific questions it is meant to solve. | A computer program with AI can answer the generic questions it is meant to solve. |
| Modification in the program leads to change in its structure. | AI programs can absorb new modifications by putting highly independent pieces of information together. Hence you can modify even a minute piece of information about the program without affecting its structure. |
| Modification is not quick and easy. It may lead to affecting the program adversely. | Quick and easy program modification. |

Applications of AI

- Gaming

- Natural Language Processing

- Expert Systems

- Vision Systems

- Speech Recognition

- Handwriting Recognition

- Intelligent Robots

Types of Artificial Intelligence

There are two types of Artificial Intelligence –

- Weak AI

- Strong AI

| Weak AI | Strong AI |
|---|---|
| Narrow application; scope is very limited | Wide application; scope is vast |
| Good at specific tasks | Human-level intelligence |
| Uses supervised and unsupervised learning | Uses clustering and association to process data |
| E.g. Siri, Alexa | E.g. robotics, automation |

Growth of AI

Ever wondered why AI is so popular and why its growth keeps accelerating? The major reasons include:

- Decreasing cost of computational power

- Availability of Data

- Better Algorithms


Subsets of Artificial Intelligence

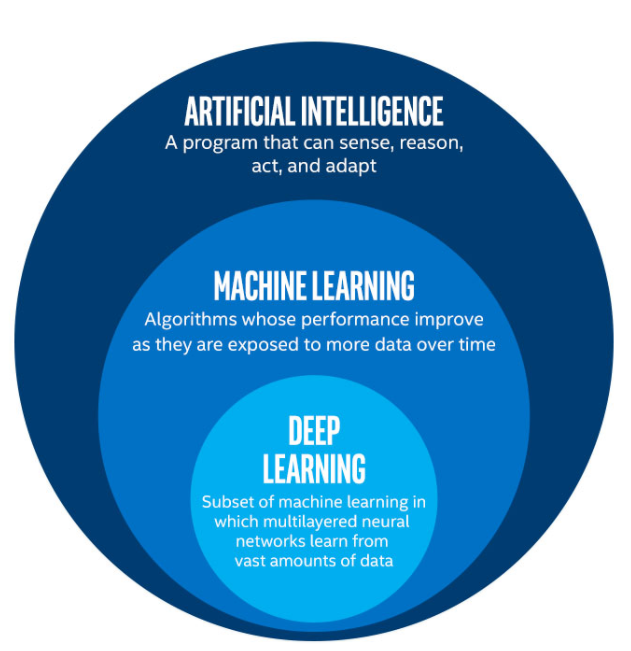

Artificial Intelligence is an umbrella term. There are two subsets of Artificial Intelligence: Machine Learning and Deep Learning.

Machine Learning

Machine learning is a branch of artificial intelligence in which a program or machine uses a set of algorithms to find patterns in the dataset(s). Above all, we don’t have to write individual instructions for every action. As machine learning models are trained on more and more data, their predictions generally improve.

Further, Machine Learning can be sub-categorized into three subsets:

- Supervised Machine Learning

- Unsupervised Machine Learning

- Reinforcement Learning
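
To make the supervised case concrete, here is a minimal sketch of learning from labelled examples. It uses a 1-nearest-neighbour rule, which is chosen here only for illustration and is not a method the tutorial itself names. The toy data and the function name `nearest_neighbor_predict` are assumptions.

```python
import math

def nearest_neighbor_predict(train_points, train_labels, query):
    """Return the label of the training point closest to `query`."""
    best_index = min(
        range(len(train_points)),
        key=lambda i: math.dist(train_points[i], query),
    )
    return train_labels[best_index]

# Hypothetical labelled data: (height_cm, weight_kg) -> size label.
train_points = [(150, 50), (160, 55), (180, 85), (190, 95)]
train_labels = ["small", "small", "large", "large"]

# The "training" here is just storing labelled examples; prediction
# looks up the most similar known example.
prediction = nearest_neighbor_predict(train_points, train_labels, (185, 90))
print(prediction)
```

No rule such as "heavier than 70 kg means large" was written by hand; the answer comes from the labelled examples. In an unsupervised setting the labels would be absent and the program would have to group the points on its own.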

Applications of Machine Learning

- Amazon Recommendation System

- Fraud Detection

- Social Media

Deep Learning

Further development of machine learning has led to a different sub-category, i.e., Deep Learning. Deep Learning makes use of artificial neural networks that consist of multiple layers of neurons, each layer working with its own parameters, to give the desired output.

Applications of Deep Learning

- Predicting Earthquakes

- Adding sounds to silent movies

- Netflix Recommendation System

What Is An Artificial Neural Network?

ANN is a non-linear model that is widely used in Machine Learning and has a promising future in the field of Artificial Intelligence.

Artificial Neural Network is analogous to a biological neural network. A biological neural network is a structure of billions of interconnected neurons in a human brain. The human brain comprises neurons that send information to various parts of the body in response to an action performed.

Similarly, an Artificial Neural Network (ANN) is a computational network that resembles the characteristics of a human brain. An ANN models the neurons of the human brain, hence its processing units are called Artificial Neurons.

An ANN consists of a large number of interconnected neurons inspired by the workings of a brain. These neurons can learn, generalize from the training data, and derive results from complicated data.

These networks are used in areas such as classification and prediction, pattern and trend identification, and optimization problems. An ANN learns from training data (inputs with known target outputs) without being explicitly programmed for the task.

A trained neural network can serve as an expert system, with the capability to analyze information and answer questions in a specific field.

The formal definition of an ANN given by Dr. Robert Hecht-Nielsen, inventor of one of the first neurocomputers, is:

“…a computing system made up of a number of simple, highly interconnected processing elements, which process information by their dynamic state response to external inputs”.

Comparison Of Biological Neuron And Artificial Neuron

| Biological Neuron | Artificial Neuron |
|---|---|
| It is made of cells. | Cells correspond to neurons (nodes). |
| Dendrites are the interconnections that bring signals to the cell body. | The connection weights correspond to dendrites. |
| The soma (cell body) receives the input. | The soma corresponds to the net (weighted) input. |
| The axon carries the signal away from the cell body. | The output of the ANN corresponds to the axon. |

Characteristics Of ANN

- Non-Linearity: The mechanism an ANN uses to turn its input signal into an output is nonlinear.

- Supervised Learning: The inputs are mapped to known outputs, and the ANN is trained on this training dataset.

- Unsupervised Learning: The target output is not given, so the ANN will learn on its own by discovering the features in the input patterns.

- Adaptive Nature: The connection weights between the nodes of an ANN can adjust themselves to give the desired output.

- Biological Neuron Analogy: The ANN has a human brain-inspired structure and functionality.

- Fault Tolerance: These networks are highly fault tolerant because the information is distributed across layers, and computation occurs in real time.
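
The supervised learning and adaptive nature items above can be sketched with a single artificial neuron that adjusts its own weights from labelled examples. This is a minimal illustration using the classic perceptron update rule, which the tutorial does not name explicitly. The function names, the learning rate of 0.5, and the logical AND dataset are assumptions.

```python
def step(x):
    """Threshold activation: fire (1) when the net input is non-negative."""
    return 1 if x >= 0 else 0

def predict(weights, bias, inputs):
    return step(sum(w * x for w, x in zip(weights, inputs)) + bias)

def train_perceptron(samples, learning_rate=0.5, epochs=20):
    """Nudge the weights by the prediction error on every sample."""
    weights = [0.0, 0.0]
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in samples:
            error = target - predict(weights, bias, inputs)
            weights = [w + learning_rate * error * x
                       for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias

# Hypothetical training set: the logical AND function.
and_samples = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
weights, bias = train_perceptron(and_samples)
predictions = [predict(weights, bias, inputs) for inputs, _ in and_samples]
print(weights, bias, predictions)
```

The weights start at zero and only change when the neuron gets an example wrong, so once every example is classified correctly the updates stop on their own.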

Structure Of ANN

Artificial Neural Networks are processing elements, implemented either as algorithms or as hardware devices, modeled after the neuronal structure of the cerebral cortex of the human brain.

These networks are also simply called Neural Networks (NNs). An NN is formed of many layers. A network of multiple interconnected layers is often called a “Multilayer Perceptron”. The neurons in a layer are called “Nodes”, and each node has an “Activation function”.

An ANN has 3 main types of layers:

- Input Layer: The input patterns are fed to the input layer. There is one input layer.

- Hidden Layers: There can be one or more hidden layers. The inner layers, where the processing takes place, are called “hidden layers”. Each hidden layer calculates its output from the “weights”, taking the “sum of weighted synapse connections”. The hidden layers refine the input by removing redundant information and send the result to the next hidden layer for further processing.

- Output Layer: The last hidden layer connects to the “output layer”, where the output is shown.
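
The flow through the three kinds of layers can be sketched as a forward pass. This is a minimal illustration and not a trained model: the layer sizes, the weight values, and the choice of a sigmoid activation are all assumptions made for the example.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def layer_forward(inputs, weights, biases):
    """Compute one layer: each row of `weights` feeds one neuron."""
    return [
        sigmoid(sum(w * x for w, x in zip(neuron_weights, inputs)) + b)
        for neuron_weights, b in zip(weights, biases)
    ]

# Hypothetical network: 2 inputs -> 3 hidden neurons -> 1 output neuron.
hidden_weights = [[0.5, -0.2], [0.1, 0.4], [-0.3, 0.8]]
hidden_biases = [0.0, 0.1, -0.1]
output_weights = [[0.7, -0.5, 0.2]]
output_biases = [0.05]

inputs = [1.0, 0.5]                                             # input layer
hidden = layer_forward(inputs, hidden_weights, hidden_biases)   # hidden layer
output = layer_forward(hidden, output_weights, output_biases)   # output layer
print(hidden, output)
```

Adding more hidden layers only means calling `layer_forward` again, passing each layer's output in as the next layer's input.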

Activation Function

The activation function determines the internal state (activation) of a neuron. It is a function of the input that the neuron receives, and it converts the input signal at a node of the ANN into an output signal.
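
A few commonly used activation functions are sketched below. The tutorial does not say which ones it has in mind, so this selection (step, sigmoid, tanh, ReLU) is an assumption.

```python
import math

def binary_step(x):
    """Outputs 1.0 once the input reaches zero, otherwise 0.0."""
    return 1.0 if x >= 0 else 0.0

def sigmoid(x):
    """Squashes any input into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))

def tanh(x):
    """Squashes any input into the range (-1, 1)."""
    return math.tanh(x)

def relu(x):
    """Passes positive inputs through and clips negative ones to 0."""
    return max(0.0, x)

net_input = 0.5  # hypothetical weighted sum arriving at a node
for name, fn in [("step", binary_step), ("sigmoid", sigmoid),
                 ("tanh", tanh), ("relu", relu)]:
    print(f"{name}: {fn(net_input):.3f}")
```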

Practical Applications for Artificial Neural Networks (ANNs)

Artificial neural networks are paving the way for life-changing applications to be developed for use in all sectors of the economy. Artificial intelligence platforms that are built on ANNs are disrupting the traditional ways of doing things. From translating web pages into other languages to having a virtual assistant order groceries online to conversing with chatbots to solve problems, AI platforms are simplifying transactions and making services accessible to all at negligible costs.

Artificial neural networks have been applied across many areas of operations:

- Email service providers use ANNs to detect and delete spam from a user’s inbox;

- Asset managers use them to forecast the direction of a company’s stock;

- Credit rating firms use them to improve their credit scoring methods;

- E-commerce platforms use them to personalize recommendations to their audience;

- Chatbots are developed with ANNs for natural language processing;

- Deep learning algorithms use ANNs to predict the likelihood of an event.

The list of ANN incorporation goes on across multiple sectors, industries, and countries.

Types of Artificial Neural Networks

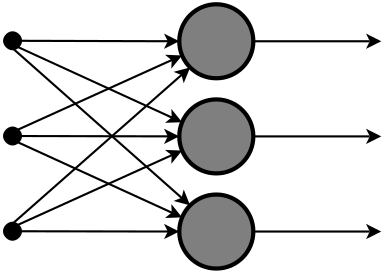

Feedforward Neural Network – Artificial Neuron:

This neural network is one of the simplest forms of ANN, where the data or the input travels in one direction. The data passes through the input nodes and exits at the output nodes. This neural network may or may not have hidden layers. In simple words, signals propagate forward only, with no feedback connections, and the network usually uses a classifying activation function.

Below is a single-layer feedforward network. Here, the sum of the products of inputs and weights is calculated and fed to the output. If the sum is above a certain value, i.e. the threshold (usually 0), the neuron fires with an activated output (usually 1); if it does not fire, the deactivated value (usually -1) is emitted.
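
The firing rule just described can be written in a few lines. This is a minimal sketch; the function name `threshold_neuron` and the example weights are assumptions.

```python
def threshold_neuron(inputs, weights, threshold=0.0):
    """Emit 1 if the weighted sum exceeds the threshold, otherwise -1."""
    weighted_sum = sum(x * w for x, w in zip(inputs, weights))
    return 1 if weighted_sum > threshold else -1

# Hypothetical inputs and weights.
fires = threshold_neuron([1.0, 2.0], [0.5, 0.5])      # weighted sum = 1.5
silent = threshold_neuron([1.0, 2.0], [0.5, -0.5])    # weighted sum = -0.5
print(fires, silent)
```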

Applications of feedforward neural networks are found in computer vision and speech recognition, where classifying the target classes is complicated. These kinds of neural networks are responsive to noisy data and easy to maintain. One documented use of feedforward neural networks is X-ray image fusion, a process of overlaying two or more images based on their edges. Here is a visual description.

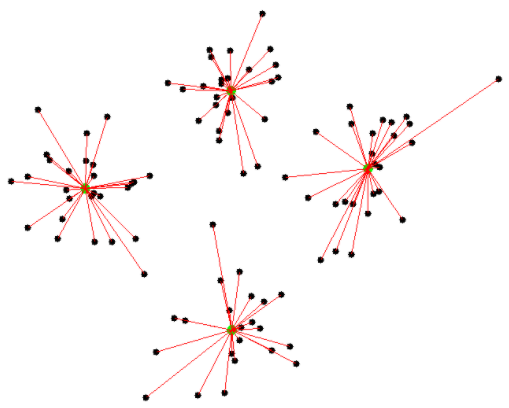

Radial basis function Neural Network:

Radial basis functions consider the distance of a point with respect to a center. RBF networks have two layers: in the inner layer the features are combined with the radial basis function, and the output of that layer is then taken into consideration when computing the final output in the next layer.

Below is a diagram that represents the distance from the center to a point in the plane, similar to the radius of a circle. Here, the distance measure used is Euclidean; other distance measures can also be used. The model depends on the maximum reach, or the radius of the circle, when classifying points into different categories. If a point is in or around the radius, the likelihood of the new point being classified into that class is high. There can be a transition when moving from one region to another, and this can be controlled by the beta function.
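
The distance-based idea can be sketched with a Gaussian radial basis function, one common choice that the tutorial does not spell out. Here `beta` is assumed to be a positive constant controlling how quickly the activation falls off with distance. The class centers and function names are hypothetical.

```python
import math

def rbf_activation(point, center, beta=1.0):
    """Gaussian RBF: 1.0 at the center, decaying with squared Euclidean distance."""
    squared_distance = sum((p - c) ** 2 for p, c in zip(point, center))
    return math.exp(-beta * squared_distance)

def classify(point, centers, beta=1.0):
    """Pick the class whose center gives the strongest activation."""
    return max(centers, key=lambda label: rbf_activation(point, centers[label], beta))

# Hypothetical class centers in the plane.
centers = {"a": (0.0, 0.0), "b": (1.0, 1.0)}
label = classify((0.9, 1.1), centers)
print(label, rbf_activation((0.9, 1.1), centers["b"]))
```

A larger `beta` makes the activation drop off faster, which sharpens the transition between regions; a smaller one makes it more gradual.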

This neural network has been applied in Power Restoration Systems. Power systems have increased in size and complexity. Both factors increase the risk of major power outages. After a blackout, power needs to be restored as quickly and reliably as possible.

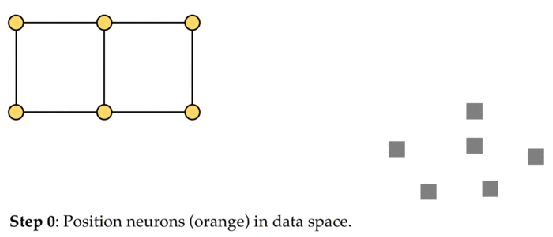

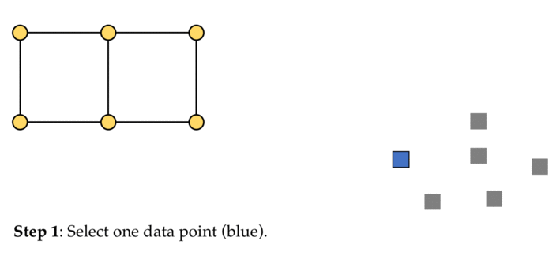

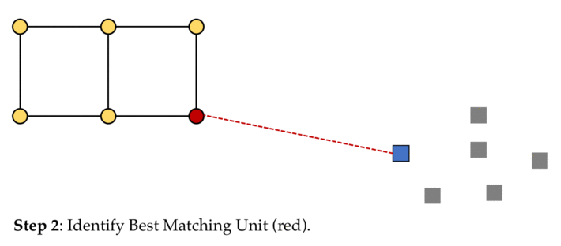

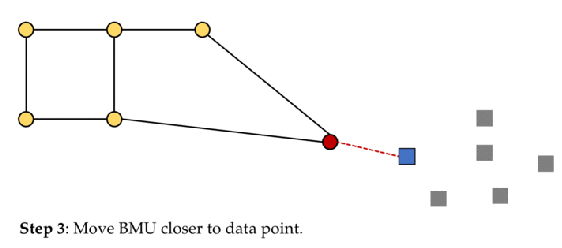

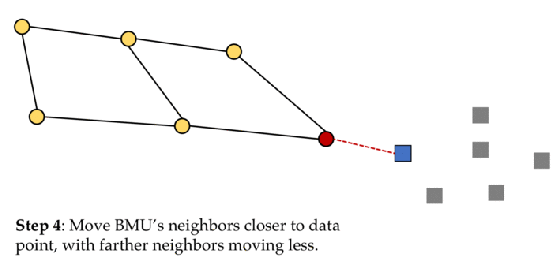

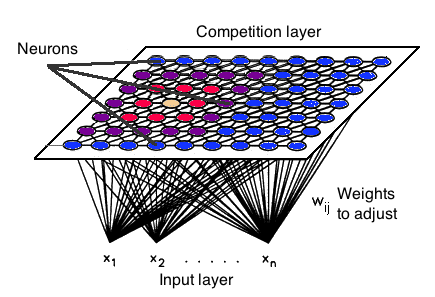

Kohonen Self Organizing Neural Network:

The objective of a Kohonen map is to map input vectors of arbitrary dimension onto a discrete map composed of neurons. The map needs to be trained to create its own organization of the training data. It comprises either one or two dimensions. When training the map, the location of each neuron remains constant, but its weights change depending on the input. This self-organization process has different phases. In the first phase, every neuron is initialized with small weights and an input vector is presented.

In the second phase, the neuron closest to the point is the ‘winning neuron’, and the neurons connected to the winning neuron also move towards the point. The distance between the point and each neuron is the Euclidean distance; the neuron with the least distance wins. Through the iterations, all the points are clustered and each neuron represents one kind of cluster. This is the gist of how the Kohonen Neural Network organizes itself.
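
One training step of this process can be sketched as follows. This is a simplified illustration on a one-dimensional map where only the immediate index neighbours of the winner are pulled along. The two rates, the starting weights, and the function names are assumptions.

```python
import math

def find_winner(neurons, point):
    """Index of the neuron whose weights are closest to the input point."""
    return min(range(len(neurons)), key=lambda i: math.dist(neurons[i], point))

def som_step(neurons, point, learning_rate=0.5, neighbor_rate=0.25):
    """Move the winner, and more gently its neighbours, towards the point."""
    winner = find_winner(neurons, point)
    updated = []
    for i, weights in enumerate(neurons):
        if i == winner:
            rate = learning_rate
        elif abs(i - winner) == 1:
            rate = neighbor_rate
        else:
            rate = 0.0
        updated.append([w + rate * (p - w) for w, p in zip(weights, point)])
    return winner, updated

# Hypothetical map of three neurons with 2-D weight vectors.
neurons = [[0.0, 0.0], [1.0, 1.0], [2.0, 2.0]]
winner, neurons_after = som_step(neurons, [2.0, 3.0])
print(winner, neurons_after)
```

Repeating this step over many input points, usually with rates that shrink over time, is what lets each neuron settle on its own cluster.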

Kohonen Neural Networks are used to recognize patterns in data. Their application can be found in medical analysis, to cluster data into different categories. In one such application, a Kohonen map was able to classify patients as glomerular or tubular with high accuracy.

Recurrent Neural Network(RNN) – Long Short Term Memory:

The Recurrent Neural Network works on the principle of saving the output of a layer and feeding this back to the input to help in predicting the outcome of the layer.

Here, the first layer is formed as in the feedforward neural network, with the sum of the products of the weights and the features. The recurrent process starts once this is computed: from one time step to the next, each neuron remembers some information it had in the previous time step.

This makes each neuron act like a memory cell while performing computations. In this process, we let the neural network work through forward propagation and remember the information it needs for later use. If the prediction is wrong, we use the learning rate and error correction to make small changes during backpropagation, so that the network gradually works towards making the right prediction.
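
The memory effect can be seen in a single recurrent neuron whose previous output is fed back in at every time step. This is a minimal sketch of a plain recurrent cell, not an LSTM, and it covers only the forward pass. The weights, the tanh activation, and the function names are assumptions.

```python
import math

def rnn_step(x, h_prev, w_input, w_hidden, bias):
    """New state mixes the current input with the previous state."""
    return math.tanh(w_input * x + w_hidden * h_prev + bias)

def run_rnn(sequence, w_input=0.5, w_hidden=0.8, bias=0.0):
    h = 0.0  # the neuron starts with no memory
    states = []
    for x in sequence:
        h = rnn_step(x, h, w_input, w_hidden, bias)
        states.append(h)
    return states

# Hypothetical sequence: one pulse followed by silence.
states = run_rnn([1.0, 0.0, 0.0])
print(states)
```

Even though the second and third inputs are zero, the state stays positive and fades gradually, which shows the neuron carrying information forward from the first time step.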

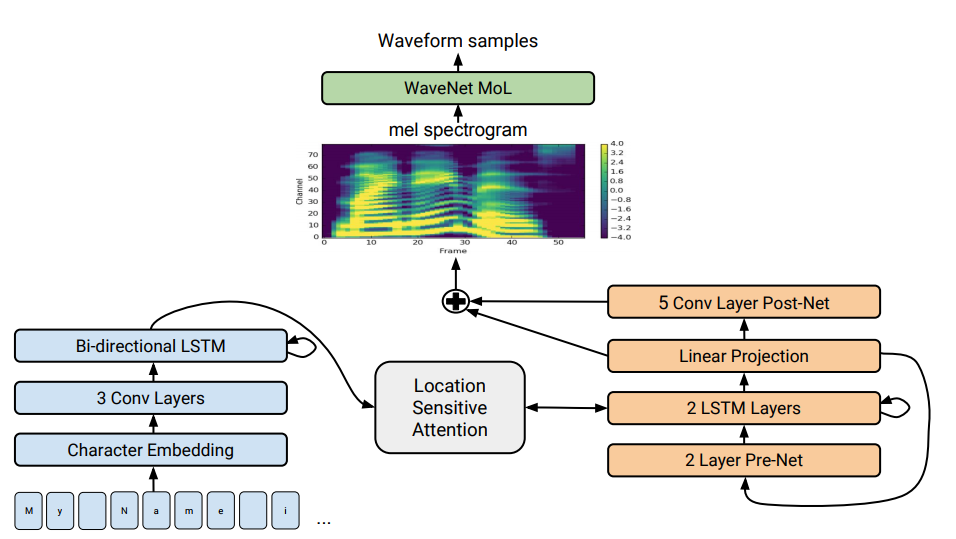

Applications of Recurrent Neural Networks can be found in text-to-speech (TTS) conversion models. One example is Deep Voice, which was developed at the Baidu Artificial Intelligence Lab in California. It was inspired by the traditional text-to-speech structure, replacing all the components with neural networks. First, the text is converted to phonemes, and then an audio synthesis model converts them into speech. RNNs are also used in Tacotron 2, which generates human-like speech from text.

Convolutional Neural Network:

Convolutional neural networks are similar to feedforward neural networks in that the neurons have learnable weights and biases. They are applied in signal and image processing, where they are taking over from OpenCV in the field of computer vision.

ConvNets are applied in signal processing and image classification. Computer vision techniques are dominated by convolutional neural networks because of their accuracy in image classification. Image analysis and recognition techniques are also being implemented in which agriculture and weather features are extracted from open-source satellites like LSAT to predict the future growth and yield of a particular piece of land.
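
The operation that gives these networks their name can be sketched directly. The example below slides a small kernel over an image with no padding and a stride of 1. In a real ConvNet the kernel values are learned; here the image and the vertical-edge kernel are hand-picked assumptions.

```python
def conv2d(image, kernel):
    """Slide the kernel over the image and sum the element-wise products."""
    kernel_h, kernel_w = len(kernel), len(kernel[0])
    out_h = len(image) - kernel_h + 1
    out_w = len(image[0]) - kernel_w + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j]
                for i in range(kernel_h) for j in range(kernel_w))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]

# Hypothetical 3x4 image: dark on the left, bright on the right.
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
vertical_edge = [[-1, 1], [-1, 1]]  # responds where brightness rises left to right
feature_map = conv2d(image, vertical_edge)
print(feature_map)
```

The feature map is non-zero only in the column where the dark region meets the bright one, so the kernel acts as a simple edge detector.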

Modular Neural Network:

Modular Neural Networks have a collection of different networks working independently and contributing towards the output. Each network has its own set of inputs, distinct from those of the other networks, and constructs and performs its own sub-task. These networks do not interact with or signal each other while accomplishing their tasks.

The advantage of a modular neural network is that it breaks down a large computational process into smaller components, decreasing the complexity. This breakdown helps decrease the number of connections and removes the interaction of these networks with each other, which in turn increases the computation speed. However, the processing time will depend on the number of neurons and their involvement in computing the results.
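
The modular idea can be sketched with two tiny independent modules whose outputs are combined only at the end. Each module here is a single sigmoid neuron for brevity, and the combining rule is a plain average. The weights, the averaging step, and all names are assumptions.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def make_module(weights, bias):
    """Build one independent module with its own weights and inputs."""
    def module(inputs):
        return sigmoid(sum(w * x for w, x in zip(weights, inputs)) + bias)
    return module

# Hypothetical modules, each seeing a different set of features.
module_a = make_module([1.0, -1.0], 0.0)
module_b = make_module([0.5, 0.5, 0.5], -1.0)

def modular_forward(inputs_a, inputs_b):
    """Run the modules separately, then combine their outputs."""
    outputs = [module_a(inputs_a), module_b(inputs_b)]
    return sum(outputs) / len(outputs)

combined = modular_forward([1.0, 1.0], [2.0, 0.0, 0.0])
print(combined)
```

Neither module reads the other's inputs or output, so the two could run in parallel, and there are no connections between them to compute.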


Modular Neural Networks (MNNs) are a rapidly growing field in artificial neural network research. The motivations for creating MNNs are biological, psychological, hardware-related, and computational. The general stages of MNN design are task decomposition techniques, learning schemes, and multi-module decision-making strategies.

5 Top Careers in Artificial Intelligence

1. Data Analytics

With data at the heart of AI and machine learning functions, those who have been trained to properly manage that data have many opportunities for success in the industry. Though data science is a broad field, the role that data analysts play in these AI processes is one of the most significant.

Responsibilities: Data analysts need to have a solid understanding of the data itself—including the practices of managing, analyzing, and storing it—as well as the skills needed to effectively communicate findings through visualization.

Education & Training: Those looking to excel in the field of analytics as it relates to AI and machine learning should consider either a master’s degree in analytics or a master’s degree in computer science with a specialization in data science.

2. User Experience

User experience (UX) roles involve working with products—including those which incorporate AI—to ensure that consumers understand their function and can easily use them. The increased use of AI in technology today has led to a growing need for UX specialists who are trained in this particular area.

Responsibilities: In general, user experience specialists are in charge of understanding how humans use equipment, and thus how computer scientists can apply that understanding to the production of more advanced software. In terms of AI, a UX specialist’s responsibilities may include understanding how humans are interacting with these tools in order to develop functionality that better fits those humans’ needs down the line.

Education & Training: Earning a master’s degree in computer science can be beneficial for those looking to pursue a career in user experience for technology.

3. Natural Language Processing

Many of the most popular consumer applications of AI today revolve around language. From chatbots to virtual assistants to predictive texting on smartphones, AI tools have been used to replicate human speech in a variety of formats. To do this effectively, developers call upon the knowledge of natural language processors—individuals who have both the language and technology skills needed to assist in the creation of these tools.

Responsibilities: As there are many applications of natural language processing, the responsibilities of the experts in this field will vary. However, in general, individuals in these roles will use their complex understanding of both language and technology to develop systems through which computers can successfully communicate with humans.

Education & Training: Those hoping to pursue a career in natural language processing should consider a master’s degree in computer science with a specialization in human-computer interface.

4. Computer Science & Artificial Intelligence Research

Although many of these top careers explore the application or function of AI technology, computer science and artificial intelligence research is more about discovering ways to advance the technology itself.

Responsibilities: A computer science and artificial intelligence researcher’s responsibilities will vary greatly depending on their specialization or their particular role in the research field. Some may be in charge of advancing the data systems related to AI. Others might oversee the development of new software that can uncover new potential in the field. Others still may be responsible for overseeing the ethics and accountability that come with the creation of such tools. No matter their specialization, however, individuals in these roles will work to uncover the possibilities of these technologies and then help implement changes in existing tools to reach that potential.

Education & Training: Due to the extensive need for a background in not only applied statistics but also mathematical theory, many of these research roles require a master’s or Ph.D. in computer science.

5. Software Engineering

The AI field also relies on traditional computer science roles such as software engineers to develop the programs on which artificial intelligence tools function.

Responsibilities: Software engineers are part of the overall design and development process of digital programs or systems. In the scope of AI, individuals in these roles are responsible for developing the technical functionality of the products which utilize machine learning to carry out a variety of tasks.

Education & Training: Individuals hoping to achieve a successful career in software engineering should have a master’s degree in computer science. Those looking to then design software specifically for AI should also consider programs with a concentration in artificial intelligence.

Top 10 Courses and Certifications in Artificial Intelligence

CFTE’s online AI in Finance course

Artificial Intelligence in Finance is an online course created by CFTE and Ngee Ann Polytechnic to help professionals understand the uses of Artificial Intelligence and Machine Learning in financial services. The course follows a format comparable to CFTE’s Fintech Foundation Course.

Across 18 modules and 4 sections, more than 20 finance and technology thought leaders and industry insiders discuss key AI topics such as Natural Language Processing (NLP), data science, recommendation engines, artificial neural networks (ANN) and more. It is a learning-based course, so it is an ideal platform for those starting their journey towards understanding AI in financial services.

Artificial Intelligence Executive Certification

This course is designed for people who are keen to learn artificial intelligence techniques and strategies for solving business problems. Once the essential topics are covered, you will go over how AI is affecting various industries, as well as the different tools involved in creating efficient solutions. By the end of the program, you will have a range of methodologies that can be used to improve the performance of your company.

Udacity Machine Learning Nanodegree

It has two three-month programs that enable you to master the skills needed to become an effective Machine Learning Engineer. It is one of the more career-centered programs and, like the Stanford course, covers the core ML principles and also dives deeper into the domain of predictive modelling. It is beginner-focused; however, expect a demanding challenge. A one-to-one technical mentor is available. It is also suitable for those on a budget, as access is charged on a monthly basis, making it potentially less expensive if you can finish the course more quickly.

Deep Learning by Andrew Ng

If you want to start a career in AI, this specialization will help you do that. Through this series of 5 courses, you will learn the fundamentals of Deep Learning, understand how to build neural networks, and lead successful ML projects. Alongside this, there are opportunities to work on case studies from different real-world businesses. The practical assignments will let you practice the concepts in Python and in TensorFlow. Furthermore, there are talks from top leaders in the field that will give you inspiration and help you understand what this career involves.

International Faculty of Finance, IFF – Artificial Intelligence in Banking Training Course

A two-day training program that covers Artificial Intelligence in banking by analyzing current leading practices across the worldwide industry. It offers an in-depth look at the best available practices and how they can be applied in any company. The course offers detailed knowledge of how AI can best suit your expanding business through 10 thorough levels. Additionally, this course is appropriate for those who are short of time.

Artificial Intelligence Certification by Columbia University

Sign up for this certification to gain expertise in one of the fastest-growing areas of computer science through a series of lectures and assignments. The classes will help you gain a strong understanding of the core principles of artificial intelligence. With equal emphasis on practice and theory, these lessons will teach you to tackle real-world problems and come up with appropriate AI solutions. With this certification in hand, it is safe to say that you will have an advantage at job interviews and other opportunities.

MIT – Artificial Intelligence: Implications for Business Strategy

MIT partners with e-learning platform GetSmarter to address the growing interest among business professionals in understanding what exactly artificial intelligence is and how it will affect business. This online AI course is practically focused and follows a pattern similar to the MIT Fintech Certificate, in which students are first given an introduction to the subject and then a capstone project to apply their understanding.

Introduction to Artificial Intelligence by IBM

Offered by IBM, this introductory course will help you learn the basics of artificial intelligence. With this course, you will learn what AI is and how it is used in the software or application development industry. During the course, you will be introduced to different issues and concerns that surround artificial intelligence, such as ethics and bias, and jobs. After finishing the course, you will also demonstrate AI in action with a mini project that is designed to test your knowledge of AI. In addition, after completing the project, you will also get your certificate of completion from Udacity.

Open Badge Programme, IBM Skills Gateway

Students on the Open Badge Programme have access to a variety of AI and ML short courses (or badges) that have been designed to help you understand the nuts and bolts of AI and ML. As it is IBM, the courses lean towards introducing students to its ecosystem of AI products, for example, IBM Watson and IBM Cloud. Courses are aimed at enterprise developers with an academic background in computer science.