What You'll Learn

Learn to manage and analyze big data using HDFS, MapReduce, and core Hadoop tools.

Build real-world data pipelines and workflows with hands-on Hadoop project experience.

Master data storage, processing, and retrieval techniques using the Hadoop ecosystem in our Big Data and Hadoop Course in Bangalore.

Work with Hive, Pig, and Sqoop to perform efficient data queries and integrations.

Gain expertise in handling large datasets through practical Big Data and Hadoop Training in Bangalore.

Understand job scheduling and resource management using YARN in a cluster environment.

Big data and Hadoop Training Objectives

- Hadoop skills are in demand: this is a simple fact! Hence, there is a pressing need for IT professionals to keep themselves up to date with Hadoop and Big Data technologies.

- Apache Hadoop gives you the means to advance your career and offers the following advantage: accelerated career growth.

- These days, organizations need Hadoop administrators to take care of large Hadoop clusters. Top firms like Facebook, eBay, and Twitter use Hadoop.

- Professionals with Hadoop skills are in high demand. According to Payscale, the average pay for Hadoop administrators is $121k.

- Since the job involves working with data, job titles such as Data Scientist, Data Engineer, and Data Analyst are offered to certified Hadoop Developers.

- Jobs are in demand across the globe: one study estimates a shortage of 190,000 data scientists in the United States alone.

- If you are curious about numbers, do the math and estimate the worldwide figures.

- Big Data is one of the fastest-growing and most promising fields among all the technologies in the IT market today.

- To take advantage of these opportunities, you need structured training with up-to-date content that follows current industry requirements and best practices.

- Learning Hadoop is a smart decision if you work in the Information Technology industry.

- There are no specific prerequisites for starting to learn the framework.

- However, it is recommended to understand the fundamentals of Java and UNIX if you want to become a Hadoop professional and build a career in it.

- Before taking a Hadoop course, a candidate is advised to have basic knowledge of programming languages like Python, Scala, and Java, and a solid understanding of SQL and RDBMS.

- Hadoop work requires knowledge of different programming languages, depending on the role you want to fill. For example, R or Python is relevant for analysis, whereas Java is more relevant for development work.

- One of the most important reasons to learn Big Data Hadoop is that it brings an array of opportunities to take your career to a new level.

- As more and more firms deal with big data, they are increasingly looking for experts who can interpret and use data.

- Software Developers, Project Managers

- Software Architects

- ETL and Data Warehousing Professionals

- Data Engineers

- Data Analysts & Business Intelligence Professionals

- DBAs and DB professionals

- Big data engineers could expect a 9.3 percent boost in starting pay in 2015, with average salaries ranging from $119,250 to $168,250.

- The average pay for a Hadoop Developer in the city, CA, is $139,000.

- A Senior Hadoop Developer in the city, CA, can earn over $178,000 on average.

Request more information

WhatsApp (For Call & Chat):

+91 89259 58912

Big data and Hadoop Course Benefits

The Big data and Hadoop Certification Course in Bangalore offers practical skills in big data processing, real-time analytics, and large-scale data management. With expert-led training, hands-on projects, and industry-relevant tools, you'll gain the confidence to handle complex data challenges. This course boosts your job readiness, enhances your technical portfolio, and opens up opportunities in top companies through dedicated placement support.


About Your Big data and Hadoop Certification Training

Our Big Data and Hadoop Training Institute in Bangalore provides an affordable, in-depth learning path covering Hadoop fundamentals, HDFS, MapReduce, Hive, and more. With 500+ hiring partners, the course offers strong placement support and real-world Big Data and Hadoop project experience in Bangalore. This Hadoop Training ensures you gain practical, job-ready skills to excel in the big data industry and secure high-growth career opportunities.

Top Skills You Will Gain

- Data Processing

- Cluster Management

- Data Storage

- Distributed Computing

- MapReduce Programming

- Data Integration

- Performance Tuning

- Fault Tolerance

12+ Big data and Hadoop Tools

Online Classroom Batches Preferred

No-Interest Financing Starts at ₹5000/month

Corporate Training

- Customized Learning

- Enterprise Grade Learning Management System (LMS)

- 24x7 Support

- Enterprise Grade Reporting

Not Just Studying, We’re Doing Much More!

Empowering Learning Through Real Experiences and Innovation

Big Data and Hadoop Course Curriculum

Trainers Profile

At LearnoVita, our trainers for the Big Data and Hadoop Internship in Bangalore are seasoned professionals with over 10 years of hands-on experience at top MNCs. They bring deep expertise in real-time Hadoop applications, making learning both practical and career-focused. Our trainers are available for online sessions with 24/7 live support, ensuring continuous guidance and mentorship. The Big Data and Hadoop Training in Bangalore includes recorded classes, live demos, and comprehensive study materials to strengthen conceptual understanding. With a strong emphasis on job-oriented skills, our instructors are dedicated to helping students achieve successful placements in the Big Data ecosystem.

Syllabus of Big Data and Hadoop Course

- High Availability

- Scaling

- Advantages and Challenges

- What is Big data

- Big Data Opportunities and Challenges

- Characteristics of Big Data

- Hadoop Distributed File System

- Comparing Hadoop & SQL

- Industries using Hadoop

- Data Locality

- Hadoop Architecture

- Map Reduce & HDFS

- Using the Hadoop single node image (Clone)

- HDFS Design & Concepts

- Blocks, NameNodes and DataNodes

- HDFS High-Availability and HDFS Federation

- Hadoop DFS: The Command-Line Interface

- Basic File System Operations

- Anatomy of File Read and File Write

- Block Placement Policy and Modes

- Detailed Explanation of Configuration Files

- Metadata, FS image, Edit log, Secondary Name Node and Safe Mode

- How to Add a New DataNode and Decommission a DataNode Dynamically (Without Stopping the Cluster)

- fsck Utility (Block Report)

- How to Override the Default Configuration at the System Level and the Programming Level

- HDFS Federation

- ZooKeeper Leader Election Algorithm

- Exercises and a Small Use Case on HDFS

- Map Reduce Functional Programming Basics

- Map and Reduce Basics

- How Map Reduce Works

- Anatomy of a Map Reduce Job Run

- Legacy Architecture: Job Submission, Job Initialization, Task Assignment, Task Execution, Progress and Status Updates

- Job Completion, Failures

- Shuffling and Sorting

- Splits, Record reader, Partition, Types of partitions & Combiner

- Optimization Techniques: Speculative Execution, JVM Reuse and Number of Slots

- Types of Schedulers and Counters

- Comparisons between Old and New API at code and Architecture Level

- Getting the data from RDBMS into HDFS using Custom data types

- Distributed Cache and Hadoop Streaming (Python, Ruby and R)

- YARN

- Sequential Files and Map Files

- Enabling Compression Codecs

- Map-Side Join with Distributed Cache

- Types of I/O Formats: Multiple Outputs, NLineInputFormat

- Handling Small Files Using CombineFileInputFormat

- Hands-on “Word Count” in MapReduce in Standalone and Pseudo-Distributed Mode

- Sorting files using Hadoop Configuration API discussion

- Emulating “grep” for searching inside a file in Hadoop

- DBInputFormat

- Job Dependency API Discussion

- InputFormat API Discussion, Split API Discussion

- Custom Data type creation in Hadoop

- ACID in RDBMS and BASE in NoSQL

- CAP Theorem and Types of Consistency

- Types of NoSQL Databases in detail

- Columnar Databases in Detail (HBASE and CASSANDRA)

- TTL, Bloom Filters and Compaction

- HBase Installation, Concepts

- HBase Data Model and Comparison between RDBMS and NOSQL

- Master & Region Servers

- HBase Operations (DDL and DML) through Shell and Programming and HBase Architecture

- Catalog Tables

- Block Cache and sharding

- SPLITS

- DATA Modeling (Sequential, Salted, Promoted and Random Keys)

- Java APIs and REST Interface

- Client-Side Buffering and Processing 1 Million Records Using Client-Side Buffering

- HBase Counters

- Enabling Replication and HBase RAW Scans

- HBase Filters

- Bulk Loading and Coprocessors (Endpoints and Observers with Programs)

- Real-World Use Case Consisting of HDFS, MR and HBase

- Hive Installation, Introduction and Architecture

- Hive Services, Hive Shell, Hive Server and Hive Web Interface (HWI)

- Metastore, HiveQL

- OLTP vs. OLAP

- Working with Tables

- Primitive data types and complex data types

- Working with Partitions

- User Defined Functions

- Hive Bucketed Tables and Sampling

- External partitioned tables, Map the data to the partition in the table, Writing the output of one query to another table, Multiple inserts

- Dynamic Partition

- Differences between ORDER BY, DISTRIBUTE BY and SORT BY

- Bucketing and Sorted Bucketing with Dynamic partition

- RCFile

- INDEXES and VIEWS

- Map-Side Joins

- Compression on Hive Tables and Migrating Hive Tables

- Dynamic Substitution in Hive and Different Ways of Running Hive

- How to enable Update in HIVE

- Log Analysis on Hive

- Access HBASE tables using Hive

- Hands on Exercises

- Pig Installation

- Execution Types

- Grunt Shell

- Pig Latin

- Data Processing

- Schema on read

- Primitive data types and complex data types

- Tuple schema, BAG Schema and MAP Schema

- Loading and Storing

- Filtering, Grouping and Joining

- Debugging commands (Illustrate and Explain)

- Validations and Type Casting in Pig

- Working with Functions

- User Defined Functions

- Types of JOINS in pig and Replicated Join in detail

- SPLITS and Multiquery execution

- Error Handling, FLATTEN and ORDER BY

- Parameter Substitution

- Nested FOREACH

- User Defined Functions, Dynamic Invokers and Macros

- How to access HBASE using PIG, Load and Write JSON DATA using PIG

- Piggy Bank

- Hands on Exercises

- Sqoop Installation

- Import Data (Full Table, Only a Subset, Target Directory, Protecting the Password, File Formats Other than CSV, Compression, Controlling Parallelism, Importing All Tables)

- Incremental Import (Importing Only New Data, the Last Imported Data, Storing the Password in the Metastore, Sharing the Metastore Between Sqoop Clients)

- Free Form Query Import

- Export Data to RDBMS, Hive and HBase

- Hands on Exercises

- HCatalog Installation

- Introduction to HCatalog

- HCatalog with Pig, Hive and MR

- Hands on Exercises

- Flume Installation

- Introduction to Flume

- Flume Agents: Sources, Channels and Sinks

- Logging User Information from a Java Program into HDFS Using Log4j with Avro Source and Tail Source

- Logging User Information from a Java Program into HBase Using Log4j with Avro Source and Tail Source

- Flume Commands

- Use Case of Flume: Stream Data from Twitter into HDFS and HBase, Then Analyze It Using Hive and Pig

- HUE (Hortonworks and Cloudera)

- Workflow (Start, Action, End, Kill, Join and Fork), Schedulers, Coordinators and Bundles, Showing How to Schedule Sqoop, Hive, MR and Pig Jobs

- Real-World Use Case That Finds the Top Websites Used by Users of Certain Ages, Scheduled to Run Every Hour

- ZooKeeper

- HBASE Integration with HIVE and PIG

- Phoenix

- Proof of concept (POC)

- Spark Overview

- Linking with Spark, Initializing Spark

- Using the Shell

- Resilient Distributed Datasets (RDDs)

- Parallelized Collections

- External Datasets

- RDD Operations

- Basics, Passing Functions to Spark

- Working with Key-Value Pairs

- Transformations

- Actions

- RDD Persistence

- Which Storage Level to Choose?

- Removing Data

- Shared Variables

- Broadcast Variables

- Accumulators

- Deploying to a Cluster

- Unit Testing

- Migrating from pre-1.0 Versions of Spark

- Where to Go from Here
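The MapReduce topics above (map and reduce basics, shuffling and sorting, combiners, and the hands-on “Word Count”) can be illustrated with a minimal single-process sketch. This is a plain-Python simulation, not Hadoop's Java API or a Hadoop Streaming script: the function names `map_phase`, `combine`, `shuffle_and_sort`, `reduce_phase`, and `word_count` are hypothetical, each inner list stands in for one input split, and a single reducer is assumed, so no partitioner is modeled.

```python
from collections import defaultdict
from itertools import groupby
from operator import itemgetter
from typing import Dict, Iterable, Iterator, List, Tuple


def map_phase(line: str) -> Iterator[Tuple[str, int]]:
    """Hypothetical mapper: emit (word, 1) for every whitespace-separated word."""
    for word in line.lower().split():
        yield word, 1


def combine(pairs: Iterable[Tuple[str, int]]) -> List[Tuple[str, int]]:
    """Hypothetical combiner: pre-aggregate counts locally for one split."""
    local: Dict[str, int] = defaultdict(int)
    for word, count in pairs:
        local[word] += count
    return list(local.items())


def shuffle_and_sort(pairs: Iterable[Tuple[str, int]]) -> List[Tuple[str, int]]:
    """Shuffle/sort stand-in: order intermediate pairs by key so equal keys are adjacent."""
    return sorted(pairs, key=itemgetter(0))


def reduce_phase(sorted_pairs: List[Tuple[str, int]]) -> Dict[str, int]:
    """Hypothetical reducer: sum the counts for each key group."""
    return {
        word: sum(count for _, count in group)
        for word, group in groupby(sorted_pairs, key=itemgetter(0))
    }


def word_count(splits: List[List[str]]) -> Dict[str, int]:
    """Run map (+ combine) per split, then one shuffle/sort and one reduce."""
    intermediate: List[Tuple[str, int]] = []
    for split in splits:  # on a cluster, each split would go to its own map task
        mapped = (pair for line in split for pair in map_phase(line))
        intermediate.extend(combine(mapped))
    return reduce_phase(shuffle_and_sort(intermediate))


if __name__ == "__main__":
    splits = [["the quick brown fox", "the lazy dog"], ["The dog barks"]]
    print(word_count(splits))
    # {'barks': 1, 'brown': 1, 'dog': 2, 'fox': 1, 'lazy': 1, 'quick': 1, 'the': 3}
```

The combiner is optional here, as in Hadoop: removing the `combine` call gives the same final counts, but more intermediate pairs reach the shuffle/sort step.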


Industry Projects

Exam & Big data and Hadoop Certification

At LearnoVita, you can enroll in either the instructor-led Hadoop Online Course, Classroom Training, or Online Self-Paced Training.

Hadoop Online Training / Class Room:

- Participate in and complete one batch of the Hadoop Training Course

- Successful completion and evaluation of any one of the given projects

Hadoop Online Self-learning:

- Complete 85% of the Hadoop Certification Training

- Successful completion and evaluation of any one of the given projects

These are the certification levels structured under the Cloudera Hadoop certification path:

- Cloudera Certified Professional - Data Scientist (CCP DS)

- Cloudera Certified Administrator for Hadoop (CCAH)

- Cloudera Certified Hadoop Developer (CCDH)

- Learn About the Certification Paths.

- Write code daily. This will help you develop your code reading and writing ability.

- Refer to and read the recommended books, depending on which exam you are going to take.

- Join LearnoVita Hadoop Certification Training in Bangalore, which gives you ample opportunity to interact with expert instructors and fellow aspirants preparing for certifications.

- Solve sample tests to increase the speed needed for attempting the exam and to sharpen quick thinking.

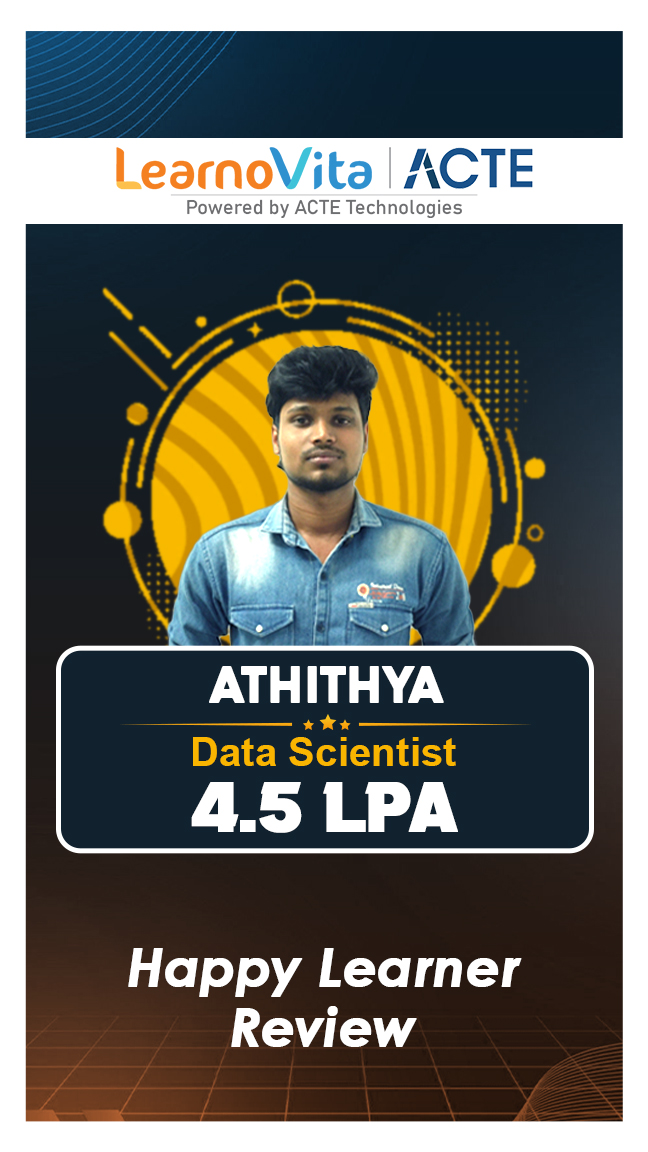

Our learners transformed their careers.

A majority of our alumni fast-tracked into managerial careers.

Get inspired by their progress in the Career Growth Report.

Our Student Success Stories

How Is the Big Data and Hadoop Course at LearnoVita Different?

| Feature | LearnoVita | Other Institutes |
| --- | --- | --- |
| Affordable Fees | Competitive Pricing With Flexible Payment Options. | Higher Big data and Hadoop Fees With Limited Payment Options. |
| Live Class From (Industry Expert) | Well Experienced Trainer From a Relevant Field With Practical Hadoop Training | Theoretical Class With Limited Practical |
| Updated Syllabus | Updated and Industry-relevant Big data and Hadoop Course Curriculum With Hands-on Learning. | Outdated Curriculum With Limited Practical Training. |
| Hands-on projects | Real-world Big data and Hadoop Projects With Live Case Studies and Collaboration With Companies. | Basic Projects With Limited Real-world Application. |
| Certification | Industry-recognized Big data and Hadoop Certifications With Global Validity. | Basic Big data and Hadoop Certifications With Limited Recognition. |
| Placement Support | Strong Placement Support With Tie-ups With Top Companies and | Basic Placement Support |
| Industry Partnerships | Strong Ties With Top Tech Companies for Internships and Placements | No Partnerships, Limited Opportunities |
| Batch Size | Small Batch Sizes for Personalized Attention. | Large Batch Sizes With Limited Individual Focus. |
| Additional Features | Lifetime Access to Big data and Hadoop Course Materials, Alumni Network, and Hackathons. | No Additional Features or Perks. |
| Training Support | Dedicated Mentors, 24/7 Doubt Resolution, and Personalized Guidance. | Limited Mentor Support and No After-hours Assistance. |

Big Data and Hadoop Course FAQs

- LearnoVita is dedicated to assisting job seekers in seeking, connecting, and achieving success, while also ensuring employers are delighted with the ideal candidates.

- Upon successful completion of a career course with LearnoVita, you may qualify for job placement assistance. We offer 100% placement assistance and maintain strong relationships with over 650 top MNCs.

- Our Placement Cell aids students in securing interviews with major companies such as Oracle, HP, Wipro, Accenture, Google, IBM, Tech Mahindra, Amazon, CTS, TCS, Sports One, Infosys, MindTree, and MPhasis, among others.

- LearnoVita has a legendary reputation for placing students, as evidenced by our Placed Students' List on our website. Last year alone, over 5400 students were placed in India and globally.

- We conduct development sessions, including mock interviews and presentation skills training, to prepare students for challenging interview situations with confidence. With an 85% placement record, our Placement Cell continues to support you until you secure a position with a better MNC.

- Please visit your student portal for free access to job openings, study materials, videos, recorded sessions, and top MNC interview questions.

- Build a Powerful Resume for Career Success

- Get Trainer Tips to Clear Interviews

- Practice with Experts: Mock Interviews for Success

- Crack Interviews & Land Your Dream Job

Global Quality Training at the Lowest Fees with Expert Trainers

Need custom pricing?

Fees Starts From

Regular 1:1 Mentorship From Industry Experts