- File formats in Hadoop Tutorial | A Concise Tutorial Just An Hour

- Controlling Hadoop Jobs Using Oozie Tutorial | The Complete Guide

- Apache Spark Streaming Tutorial | Best Guide For Beginners

- What is Elasticsearch | Tutorial for Beginners

- Amazon Kinesis : Process & Analyze Streaming Data | The Ultimate Student Guide

- Apache Camel Tutorial – EIP, Routes, Components | Ultimate Guide to Learn [BEST & NEW]

- Apache NiFi (Cloudera DataFlow) | Become an expert with Free Online Tutorial

- Kafka Tutorial : Learn Kafka Configuration

- Apache Sqoop Tutorial

- Spark And RDD Cheat Sheet Tutorial

- Apache Pig Tutorial

- Talend

- Cassandra Tutorial

- Kafka Tutorial

- HBase Tutorial

- Spark Java Tutorial

- ELK Stack Tutorial

- Netbeans Tutorial

- PySpark MLlib Tutorial

- Spark RDD Optimization Techniques Tutorial

- Apache Spark & Scala Tutorial

- Apache Impala Tutorial

- Apache Oozie: A Concise Tutorial Just An Hour | LearnoVita

- Apache Storm Advanced Concepts Tutorial

- Apache Storm Tutorial

- Hadoop Mapreduce tutorial

- Hive cheat sheet

- Spark Algorithm Tutorial

- Apache Spark Tutorial

- Apache Cassandra Data Model Tutorial

- Big Data Applications Tutorial

- Advanced Hive Concepts and Data File Partitioning Tutorial

- Hadoop Architecture Tutorial

- Big Data and Hadoop Ecosystem Tutorial

- Apache Mahout Tutorial

- Hadoop Tutorial

- BIG DATA Tutorial

Big Data and Hadoop Ecosystem Tutorial

Last updated on 29th Sep 2020, Big Data, Blog, Tutorials

To most people, Big Data is a baffling tech term. If you mention Big Data, you may well be asked questions such as “Is it a tool or a product?” or “Is Big Data only for big businesses?”, among many others.

So, what is Big Data?

Today, the size (volume), complexity (variety), and rate of growth (velocity) of the data that organizations handle have reached levels that traditional processing and analytical tools cannot cope with.

Big Data keeps growing, so it cannot be defined by a fixed size. What was considered big eight years ago is no longer considered so.

For example, Nokia, the telecom giant, migrated to Hadoop to analyze 100 terabytes of structured data and more than 500 terabytes of semi-structured data.

Its data warehouse, built on the Hadoop Distributed File System (HDFS), stored all the multi-structured data and processed it at petabyte scale.

According to The Big Data Market report, the Big Data market is expected to grow from USD 28.65 billion in 2016 to USD 66.79 billion by 2021.

Big data refers to all the data generated through various platforms across the world.

Categories of big data:

1. Structured

2. Unstructured

3. Semi-structured

Examples of Big Data:

1) Stock exchanges: The New York Stock Exchange generates about 1 TB of new trade data per day.

2) Social media: Statistics show that 500+ terabytes of data are ingested into the databases of the social media site Facebook every day.

Data is mainly generated in terms of:

- Photos and video uploads

- Message exchanges

- Comments

3) Jet engines / travel portals:

A single jet engine can generate 10+ terabytes (TB) of data in 30 minutes of flight. With thousands of flights per day, the data generated reaches many petabytes (PB).

What Is Hadoop?

Hadoop is an open source framework managed by the Apache Software Foundation. Open source implies that it is freely available and its source code can be changed as per the user’s requirements. Apache Hadoop is designed to store and process big data efficiently. Hadoop is used for data storing, processing, analyzing, accessing, governance, operations, and security.

Large organizations with huge amounts of data use Hadoop to process that data on large clusters of commodity hardware. A cluster is a group of systems connected via a LAN, and the multiple nodes of the cluster work together to perform Hadoop jobs. Hadoop has gained popularity worldwide for managing big data and, at present, it has a nearly 90% market share.

Features of Hadoop

- Cost Effective: Hadoop is cost effective because it does not require specialized hardware and therefore needs little upfront investment. Inexpensive, widely available machines, known as commodity hardware, are sufficient.

- Supports Large Clusters of Nodes: A Hadoop cluster can consist of thousands of nodes. A larger cluster provides more storage and more computing power.

- Parallel Processing of Data: Hadoop processes data in parallel across the nodes in the cluster, which reduces the time needed to store and process it.

- Distribution of Data (Distributed Processing): Hadoop distributes data across the nodes in a cluster. It also replicates each piece of data on several nodes, so that the data can be retrieved from another node if a particular node is busy or fails.

- Automatic Failover Management (Fault Tolerance): Hadoop automatically handles the failure of a node in the cluster. The framework reassigns the failed node's work to other nodes and re-creates the lost copies of its data from the remaining replicas.

- Supports Heterogeneous Clusters: A heterogeneous cluster is one whose nodes come from different vendors and run different operating systems and versions. For instance, a Hadoop cluster might have three systems: a Lenovo machine running RHEL Linux, an Intel machine running Ubuntu Linux, and an AMD machine running Fedora Linux. All of these can run together in a single cluster.

- Scalability: Nodes and hardware components can be added to or removed from a Hadoop cluster without affecting its operation. This scalability is one of the important features of Hadoop.
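The replication and failover features above can be illustrated with a small in-memory sketch. It is a toy model, not Hadoop code: the node names, the round-robin placement and the replication factor of 3 are all assumptions chosen for the example.

```python
import itertools

REPLICATION = 3  # assumed replication factor for this sketch


def place_blocks(blocks, nodes, replication=REPLICATION):
    """Assign each block to `replication` distinct nodes, round-robin.

    Assumes replication <= len(nodes), so the holders of a block are distinct.
    """
    placement = {}
    ring = itertools.cycle(nodes)
    for block in blocks:
        placement[block] = [next(ring) for _ in range(replication)]
    return placement


def handle_failure(placement, failed, live_nodes, replication=REPLICATION):
    """Drop the failed node and copy under-replicated blocks to other live nodes."""
    for holders in placement.values():
        if failed in holders:
            holders.remove(failed)
        for node in live_nodes:
            if len(holders) >= replication:
                break
            if node not in holders:
                holders.append(node)
    return placement


nodes = ["n1", "n2", "n3", "n4"]  # hypothetical node names
placement = place_blocks(["b0", "b1"], nodes)
handle_failure(placement, "n2", ["n1", "n3", "n4"])
print(placement)
```

After the simulated failure of `n2`, every block is again held by three live nodes, which is the behavior the fault tolerance bullet describes.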

Demand for Big Data Hadoop training courses has grown as enterprises have adopted Hadoop for big data management. To master Apache Hadoop, it helps to work through industry use cases and to understand in depth how the Hadoop ecosystem and architecture fit together. Before enrolling in any such course, however, it is worth getting a basic idea of how the Hadoop ecosystem works. This article introduces the components that make up the Apache Hadoop architecture.

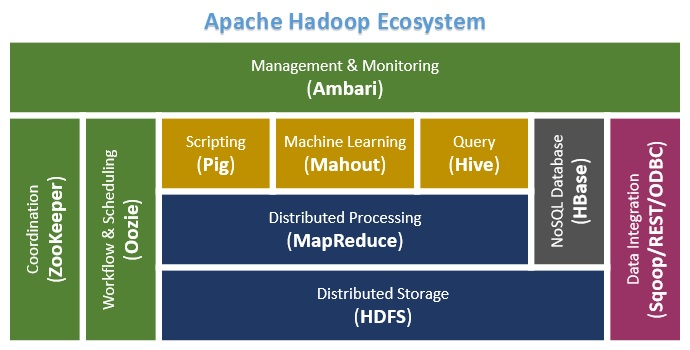

Each component of the Hadoop ecosystem is a distinct entity. Seen as a whole, the Hadoop architecture centers on four modules: Hadoop Common, Hadoop YARN, the Hadoop Distributed File System (HDFS) and Hadoop MapReduce. Hadoop Common provides the Java libraries, utilities, OS-level abstractions and scripts needed to run Hadoop, while Hadoop YARN is a framework for job scheduling and cluster resource management. HDFS provides high-throughput access to application data, and Hadoop MapReduce provides YARN-based parallel processing of large data sets.

In our earlier articles, we defined “What is Apache Hadoop”. To recap, Apache Hadoop is an open source distributed computing framework for storing and processing huge unstructured datasets spread across the nodes of a cluster. The basic principle behind Apache Hadoop is to break data into many parts and distribute them so they can be analyzed concurrently. Because the data is replicated, big data applications on Apache Hadoop continue to run even if an individual server fails.

With big data being used extensively to leverage analytics for gaining meaningful insights, Apache Hadoop is the solution for processing big data. The Apache Hadoop architecture consists of various Hadoop components and an amalgamation of different technologies that provide immense capabilities for solving complex business problems.

Let us dive deep into the Hadoop architecture and its components to build the right solution for a given business problem.

Defining Architecture Components of the Big Data Ecosystem

Core Hadoop Components

The Hadoop ecosystem comprises 4 core components:

1) Hadoop Common

Hadoop Common is a pre-defined set of utilities and libraries from the Apache Software Foundation that can be used by other modules within the Hadoop ecosystem. For example, if HBase and Hive want to access HDFS, they need to make use of Java archives (JAR files) that are stored in Hadoop Common.

2) Hadoop Distributed File System (HDFS) –

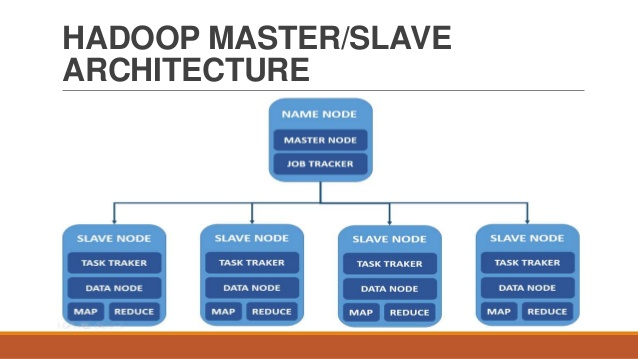

The default big data storage layer for Apache Hadoop is HDFS. HDFS is the “secret sauce” of Apache Hadoop: users can dump huge datasets into HDFS, and the data will sit there until they want to analyze it. HDFS creates several replicas of each data block and distributes them across different nodes of the cluster for reliable and quick data access. HDFS comprises 3 important components: NameNode, DataNode and Secondary NameNode. It operates on a master-slave architecture in which the NameNode acts as the master, keeping track of where data is stored in the cluster, and the DataNodes act as slaves, running on the individual machines of the Hadoop cluster and storing the actual data blocks.
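As a rough illustration of the NameNode and DataNode roles, the sketch below splits a file into fixed-size blocks and records which DataNodes hold each block. It is a toy model rather than HDFS itself: the 128 MB block size, the replication factor of 3, the DataNode names and the simple placement rule are assumptions made for the example.

```python
BLOCK_SIZE_MB = 128  # assumed block size; configurable in a real cluster


def split_into_blocks(file_size_mb, block_size_mb=BLOCK_SIZE_MB):
    """Return the sizes (in MB) of the blocks a file of this size would occupy."""
    full, rest = divmod(file_size_mb, block_size_mb)
    return [block_size_mb] * full + ([rest] if rest else [])


class ToyNameNode:
    """Keeps only metadata: which DataNodes hold which block of which file."""

    def __init__(self, datanodes, replication=3):
        self.datanodes = list(datanodes)
        self.replication = replication
        self.block_map = {}  # (path, block index) -> list of DataNode names

    def add_file(self, path, file_size_mb):
        """Register a file and return the number of blocks it was split into."""
        sizes = split_into_blocks(file_size_mb)
        n = len(self.datanodes)
        for i in range(len(sizes)):
            # Place the replicas of block i on consecutive DataNodes.
            holders = [self.datanodes[(i + r) % n] for r in range(self.replication)]
            self.block_map[(path, i)] = holders
        return len(sizes)

    def locate(self, path, index):
        """Answer a client's question: where can I read this block?"""
        return self.block_map[(path, index)]


namenode = ToyNameNode(["dn1", "dn2", "dn3", "dn4"])  # hypothetical DataNodes
print(namenode.add_file("/logs/app.log", 300))  # a 300 MB file
print(namenode.locate("/logs/app.log", 1))
```

The master holds only the block map; the data itself would live on the DataNodes, which is why clients ask the NameNode for locations and then read from the DataNodes directly.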

HDFS Use Case-

Nokia deals with more than 500 terabytes of unstructured data and close to 100 terabytes of structured data. Nokia uses HDFS to store all of its structured and unstructured data sets because it allows the stored data to be processed at petabyte scale.

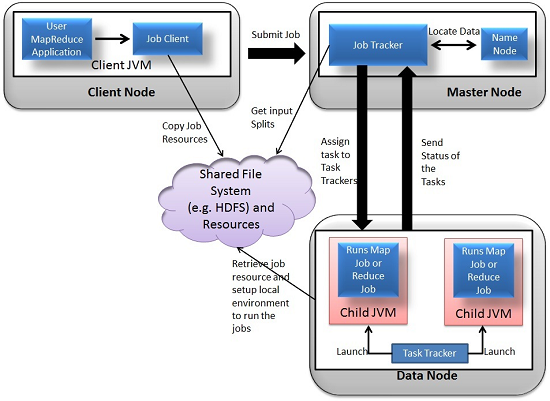

3) MapReduce- Distributed Data Processing Framework of Apache Hadoop

MapReduce is a programming model introduced by Google; Hadoop MapReduce is a Java-based implementation of it in which the data stored in HDFS gets processed. MapReduce breaks down a big data processing job into smaller tasks, analyses large datasets in parallel, and then reduces the intermediate output to the final results. In the Hadoop ecosystem, Hadoop MapReduce runs on top of YARN. The YARN-based architecture supports parallel processing of huge data sets, and MapReduce provides a framework for writing applications that run on thousands of nodes while handling faults and failures.

The basic principle of operation behind MapReduce is that the “Map” job sends a query for processing to various nodes in a Hadoop cluster and the “Reduce” job collects all the results and combines them into the output. The Map task takes the input data, splits it into independent chunks and processes them; its output becomes the input of the Reduce task. The Reduce task combines the mapped data tuples into a smaller set of tuples. Both the input and the output of these tasks are stored in a file system. MapReduce takes care of scheduling jobs, monitoring them and re-executing failed tasks.
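The map, shuffle and reduce steps can be sketched in a few lines of plain Python using the classic word count example. This runs in a single process and only mirrors the data flow; real Hadoop jobs are typically written against the Java MapReduce API and run across many nodes. The function names are chosen for this illustration.

```python
from collections import defaultdict


def map_phase(chunk):
    """Map: emit a (word, 1) pair for every word in one input chunk."""
    return [(word.lower(), 1) for word in chunk.split()]


def shuffle(pairs):
    """Shuffle: group all emitted values by key."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(grouped):
    """Reduce: combine the values of each key into a single result."""
    return {key: sum(values) for key, values in grouped.items()}


def word_count(chunks):
    """Run the three phases over a list of independent input chunks."""
    pairs = [pair for chunk in chunks for pair in map_phase(chunk)]
    return reduce_phase(shuffle(pairs))


counts = word_count(["big data big", "data hadoop"])
print(counts)  # {'big': 2, 'data': 2, 'hadoop': 1}
```

Each chunk is mapped independently, which is what allows the map step to be spread over many machines; only the shuffle needs to bring values with the same key together.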

The MapReduce framework forms the compute node, while the HDFS file system forms the data node. In the Hadoop ecosystem architecture, the data node and the compute node are typically the same machine.

In Hadoop 1, the delegation of MapReduce tasks is handled by two daemons, the JobTracker and the TaskTracker, as shown in the image below.

MapReduce Use Case:

Skybox has developed an economical imaging satellite system for capturing videos and images of any location on earth. Skybox uses Hadoop to analyse the large volumes of image data downloaded from the satellites. Its image processing algorithms are written in C++. Busboy, a proprietary framework of Skybox, makes use of built-in code from the Java-based MapReduce framework.

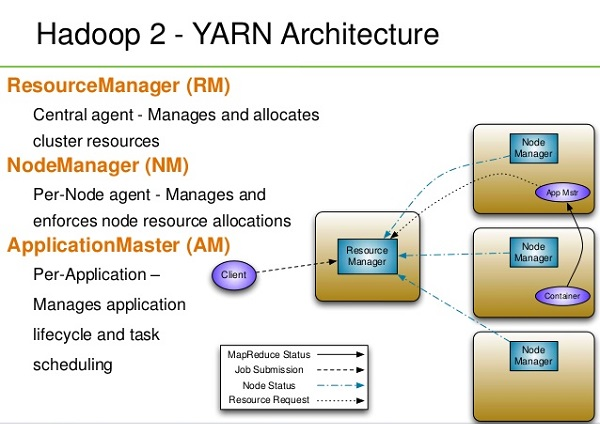

4) YARN

YARN forms an integral part of Hadoop 2.0. YARN is a great enabler of dynamic resource utilization on the Hadoop framework, as users can run various Hadoop applications without having to worry about increasing workloads.

Key Benefits of Hadoop 2.0 YARN Component-

- It offers improved cluster utilization

- Highly scalable

- Beyond Java

- Novel programming models and services

- Agility

YARN Use Case:

Yahoo has close to 40,000 nodes running Apache Hadoop, with 500,000 MapReduce jobs per day consuming 230 compute years of processing every day. YARN helped Yahoo increase the load on its most heavily used Hadoop cluster to 125,000 jobs a day, compared with 80,000 jobs a day before, an increase of more than 50%.

The core components listed above form the basic distributed Hadoop framework. Several other components are an integral part of the Hadoop ecosystem and enhance Apache Hadoop in one way or another, for example by providing better integration with databases, making Hadoop faster, or adding new features and functionality. Here are some of the prominent Hadoop components used extensively by enterprises:

Data Access Components of Hadoop Ecosystem- Pig and Hive

- Pig-

Apache Pig is a convenient tool developed by Yahoo for analysing huge data sets efficiently and easily. It provides a high-level data flow language, Pig Latin, that is optimized, extensible and easy to use. The most outstanding feature of Pig programs is that their structure is open to considerable parallelization, which makes it easy to handle large data sets.

Pig Use Case-

The personal healthcare data of an individual is confidential and should not be exposed to others. This information should be masked to maintain confidentiality, but healthcare data sets are so large that identifying and removing personal information is a challenge. Apache Pig can be used in such circumstances to de-identify health information.
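The kind of per-record transformation involved in de-identification can be sketched as follows. This is plain Python rather than Pig Latin, and the field names, the salt and the choice of a truncated SHA-256 digest are assumptions for illustration only; real de-identification usually involves much more than hashing a few fields.

```python
import hashlib

SENSITIVE_FIELDS = {"name", "ssn"}  # hypothetical field names


def deidentify(record, salt="demo-salt"):
    """Replace sensitive fields with a salted hash and keep the rest unchanged."""
    masked = {}
    for field, value in record.items():
        if field in SENSITIVE_FIELDS:
            digest = hashlib.sha256((salt + str(value)).encode("utf-8")).hexdigest()
            masked[field] = digest[:12]  # shortened token standing in for the value
        else:
            masked[field] = value
    return masked


patient = {"name": "Jane Doe", "ssn": "123-45-6789", "diagnosis": "flu"}
print(deidentify(patient))
```

Because each record is processed independently, a transformation like this parallelizes well, which is why a data flow tool such as Pig suits the task.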

- Hive-

Hive, developed by Facebook, is a data warehouse built on top of Hadoop. It provides a simple SQL-like language known as HiveQL for querying, data summarization and analysis. Hive makes querying faster through indexing.
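To give a feel for the SQL-style summarization that HiveQL offers, the sketch below runs a GROUP BY query with Python's built-in `sqlite3` module. SQLite stands in for Hive here purely for illustration: the table and column names are made up, and the query runs in memory rather than as jobs on a Hadoop cluster.

```python
import sqlite3

# An in-memory SQLite database stands in for a Hive table in this sketch.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE page_views (country TEXT, views INTEGER)")
conn.executemany(
    "INSERT INTO page_views VALUES (?, ?)",
    [("IN", 120), ("US", 80), ("IN", 30)],
)

# A summarization query of the kind typically written in HiveQL.
rows = conn.execute(
    "SELECT country, SUM(views) AS total "
    "FROM page_views GROUP BY country ORDER BY total DESC"
).fetchall()
conn.close()

print(rows)  # [('IN', 150), ('US', 80)]
```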

Hive Use Case-

Hive simplifies Hadoop at Facebook with the execution of 7500+ Hive jobs daily for Ad-hoc analysis, reporting and machine learning.

Data Integration Components of Hadoop Ecosystem- Sqoop and Flume

- Sqoop

The Sqoop component is used for importing data from external sources into related Hadoop components like HDFS, HBase or Hive. It can also be used for exporting data from Hadoop to other external structured data stores. Sqoop parallelizes data transfer, mitigates excessive loads on external systems, makes data analysis more efficient and copies data quickly.
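One way to picture the parallel transfer is as splitting the range of a key column into slices, with each slice imported by a separate task. The sketch below shows that idea only; the function name is hypothetical and Sqoop's actual splitting logic differs in its details.

```python
def split_ranges(min_id, max_id, num_tasks):
    """Divide the inclusive key range [min_id, max_id] into contiguous slices."""
    total = max_id - min_id + 1
    base, extra = divmod(total, num_tasks)
    ranges, start = [], min_id
    for i in range(num_tasks):
        size = base + (1 if i < extra else 0)  # spread the remainder over the first slices
        if size == 0:
            continue  # more tasks than keys: skip empty slices
        ranges.append((start, start + size - 1))
        start += size
    return ranges


# Each (low, high) pair could back one query such as
# "SELECT ... WHERE id BETWEEN low AND high", run by its own task.
print(split_ranges(1, 10, 4))  # [(1, 3), (4, 6), (7, 8), (9, 10)]
```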

Sqoop Use Case-

Online marketer Coupons.com uses the Sqoop component of the Hadoop ecosystem to transfer data between Hadoop and the IBM Netezza data warehouse, and pipes the results back into Hadoop using Sqoop.

- Flume-

The Flume component is used to gather and aggregate large amounts of data. Apache Flume collects data from its origin and sends it on to its resting location (HDFS). Flume accomplishes this by defining data flows that consist of 3 primary structures: sources, channels and sinks. The processes that run the data flow are known as agents, and the pieces of data that flow through Flume are known as events.
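The source, channel and sink structure can be mimicked with a small in-process pipeline. This is a conceptual sketch, not Flume's API: the `ToyAgent` class is hypothetical, the source is any Python iterable, the channel is a bounded queue and the sink is any callable.

```python
from queue import Queue


class ToyAgent:
    """Moves events from a source, through a buffering channel, to a sink."""

    def __init__(self, source, sink, capacity=100):
        self.source = source                    # iterable that produces events
        self.channel = Queue(maxsize=capacity)  # buffer between source and sink
        self.sink = sink                        # callable that consumes one event

    def run(self):
        for event in self.source:
            self.channel.put(event)             # source -> channel
            while not self.channel.empty():
                self.sink(self.channel.get())   # channel -> sink


delivered = []
agent = ToyAgent(source=["event-1", "event-2", "event-3"], sink=delivered.append)
agent.run()
print(delivered)  # ['event-1', 'event-2', 'event-3']
```

The channel decouples the two ends: in a real deployment the source and sink run at different speeds, and the channel absorbs the difference.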

Flume Use Case –

A Twitter source connects through the streaming API and continuously downloads tweets (called events). These tweets are converted into JSON format and sent to the downstream Flume sinks for further analysis of tweets and retweets to engage users on Twitter.

Data Storage Component of Hadoop Ecosystem –HBase

HBase –

HBase is a column-oriented database that uses HDFS for underlying storage of data. HBase supports random reads as well as batch computations using MapReduce. With the HBase NoSQL database, an enterprise can create large tables with millions of rows and columns on commodity hardware. HBase is best used when there is a requirement for random read or write access to big datasets.
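The data model behind this random access can be approximated as a map from row key to columns, where each column is named `family:qualifier`. The class below is a toy stand-in and not the HBase client API; the method names are borrowed loosely from HBase terminology, and versioning, regions and persistence are left out.

```python
from collections import defaultdict


class ToyTable:
    """Row key -> {"family:qualifier": value}, with random reads and writes."""

    def __init__(self):
        self.rows = defaultdict(dict)

    def put(self, row_key, column, value):
        """Write a single cell."""
        self.rows[row_key][column] = value

    def get(self, row_key, column=None):
        """Read one cell, or the whole row when no column is given."""
        row = self.rows.get(row_key, {})
        return dict(row) if column is None else row.get(column)

    def scan(self, start_row, stop_row):
        """Yield rows in key order for start_row <= key < stop_row."""
        for key in sorted(self.rows):
            if start_row <= key < stop_row:
                yield key, dict(self.rows[key])


table = ToyTable()
table.put("user1", "info:name", "Ada")
table.put("user1", "info:city", "London")
table.put("user2", "info:name", "Bob")
print(table.get("user1", "info:name"))  # Ada
print(list(table.scan("user1", "user2")))
```

Rows do not need to share the same set of columns, which is part of what makes this layout suitable for sparse tables with very many columns.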

HBase Use Case-

Facebook is one of the largest users of HBase, with its messaging platform built on top of HBase in 2010. HBase is also used by Facebook for streaming data analysis, its internal monitoring system, the Nearby Friends feature, search indexing and scraping data for its internal data warehouses.

Monitoring, Management and Orchestration Components of Hadoop Ecosystem- Oozie and Zookeeper

- Oozie-

Oozie is a workflow scheduler in which workflows are expressed as Directed Acyclic Graphs. Oozie runs in a Java servlet container (Tomcat) and uses a database to store all the running workflow instances, their states and variables, along with the workflow definitions, in order to manage Hadoop jobs (MapReduce, Sqoop, Pig and Hive). Workflows in Oozie are executed based on data and time dependencies.
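What it means to express a workflow as a Directed Acyclic Graph can be shown with Python's standard `graphlib` module (Python 3.9+). Real Oozie workflows are defined in XML; the action names below are hypothetical and the sketch only demonstrates dependency ordering.

```python
from graphlib import TopologicalSorter

# Each action maps to the set of actions that must finish before it starts.
workflow = {
    "sqoop_import": set(),
    "load_reference": set(),
    "pig_clean": {"sqoop_import"},
    "hive_aggregate": {"pig_clean", "load_reference"},
    "export_report": {"hive_aggregate"},
}

# static_order() yields every action after all of its dependencies.
order = list(TopologicalSorter(workflow).static_order())
print(order)
```

If the graph contained a cycle, `TopologicalSorter` would raise `graphlib.CycleError`, which reflects why a workflow must be acyclic to be schedulable.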

Oozie Use Case:

The American video game publisher Riot Games uses Hadoop and the open source tool Oozie to understand the player experience.

- Zookeeper-

Zookeeper is the king of coordination and provides simple, fast, reliable and ordered operational services for a Hadoop cluster. Zookeeper is responsible for synchronization service, distributed configuration service and for providing a naming registry for distributed systems.

Zookeeper Use Case-

Found by Elastic uses Zookeeper comprehensively for resource allocation, leader election, high priority notifications and discovery. The entire Found service is built up of various systems that read from and write to Zookeeper.
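Leader election, one of the uses mentioned above, is commonly built on the idea that each participant registers with an increasing sequence number and the lowest surviving number is the leader. The sketch below models that idea in a single process; it does not talk to a Zookeeper server, and the `ToyElection` class and participant names are hypothetical.

```python
class ToyElection:
    """The participant holding the lowest live sequence number is the leader."""

    def __init__(self):
        self.next_seq = 0
        self.members = {}  # sequence number -> participant name

    def join(self, name):
        """Register a participant and return its sequence number."""
        seq = self.next_seq
        self.next_seq += 1
        self.members[seq] = name
        return seq

    def leave(self, seq):
        """Remove a participant, as would happen when its session ends."""
        self.members.pop(seq, None)

    def leader(self):
        return self.members[min(self.members)] if self.members else None


election = ToyElection()
a = election.join("worker-a")
b = election.join("worker-b")
print(election.leader())  # worker-a
election.leave(a)         # worker-a fails or disconnects
print(election.leader())  # worker-b
```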

Several other common Hadoop ecosystem components include: Avro, Cassandra, Chukwa, Mahout, HCatalog, Ambari and Hama. By implementing Hadoop using one or more of the Hadoop ecosystem components, users can personalize their big data experience to meet the changing business requirements. The demand for big data analytics will make the elephant stay in the big data room for quite some time.

Apache AMBARI or Call it the Elephant Rider

The major drawback of Hadoop 1 was the lack of an open source operations console for enterprise teams. As a result, operations and admin teams needed complete knowledge of Hadoop semantics and other internals to create and replicate Hadoop clusters, monitor resource allocation and write operational scripts. Ambari is a Hadoop component that lets you provision, manage and monitor Hadoop clusters. It exposes RESTful APIs and provides an easy-to-use web user interface for Hadoop management. Ambari provides a step-by-step wizard for installing Hadoop ecosystem services. It offers central management to start, stop and reconfigure Hadoop services, and its metrics collection and alert framework can monitor the health of the Hadoop cluster. A recent release of Ambari added a service check for Apache Spark services and supports Spark 1.6.

- Regardless of the size of the Hadoop cluster, deploying and maintaining hosts is simplified with the use of Apache Ambari.

- Ambari monitors the health and status of a Hadoop cluster in minute detail and displays the metrics on the web user interface.

Apache MAHOUT

Mahout is an important Hadoop component for machine learning that provides implementations of various machine learning algorithms. It helps with making suggestions based on user behavior (recommendation), grouping similar items together (clustering), assigning items to categories (classification), and frequent itemset mining, which determines the items that tend to appear together in a group.
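The idea of finding items that appear together, and using that to make suggestions, can be sketched with simple co-occurrence counting. This is a plain Python illustration and not Mahout's API; the basket data and function names are made up, and production recommenders use far more refined scoring.

```python
from collections import Counter
from itertools import combinations


def cooccurrence(baskets):
    """Count how often each unordered pair of items appears in the same basket."""
    counts = Counter()
    for basket in baskets:
        for a, b in combinations(sorted(set(basket)), 2):
            counts[(a, b)] += 1
    return counts


def recommend(item, baskets, top_n=2):
    """Suggest the items that most often co-occur with `item`."""
    scores = Counter()
    for (a, b), n in cooccurrence(baskets).items():
        if a == item:
            scores[b] += n
        elif b == item:
            scores[a] += n
    return [other for other, _ in scores.most_common(top_n)]


baskets = [["milk", "bread"], ["milk", "bread", "eggs"], ["bread", "eggs"]]
print(cooccurrence(baskets)[("bread", "milk")])  # 2
print(recommend("milk", baskets, top_n=1))       # ['bread']
```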

Apache Kafka

A distributed publish-subscribe messaging system developed by LinkedIn that is fast, durable and scalable. Just like other publish-subscribe messaging systems, feeds of messages are maintained in topics.
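The topic-based publish-subscribe model can be pictured as an append-only log per topic, with each group of consumers tracking its own read position. The in-memory `ToyBroker` below is a hypothetical teaching aid, not the Kafka client API, and it ignores partitions, persistence and networking.

```python
from collections import defaultdict


class ToyBroker:
    """Keeps an append-only log per topic and a read offset per consumer group."""

    def __init__(self):
        self.topics = defaultdict(list)  # topic -> list of messages
        self.offsets = defaultdict(int)  # (group, topic) -> next offset to read

    def publish(self, topic, message):
        """Append a message to the topic and return its offset."""
        self.topics[topic].append(message)
        return len(self.topics[topic]) - 1

    def poll(self, group, topic, max_messages=10):
        """Return unread messages for this group and advance its offset."""
        start = self.offsets[(group, topic)]
        batch = self.topics[topic][start:start + max_messages]
        self.offsets[(group, topic)] = start + len(batch)
        return batch


broker = ToyBroker()
broker.publish("logs", "user signed in")
broker.publish("logs", "user signed out")
print(broker.poll("analytics", "logs"))  # both messages
print(broker.poll("analytics", "logs"))  # [] because this group is caught up
print(broker.poll("alerts", "logs", 1))  # a second group starts from the beginning
```

Because messages stay in the log after being read, several independent groups can consume the same topic at their own pace.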

Apache Kafka Use Cases

- Spotify uses Kafka as a part of their log collection pipeline.

- Airbnb uses Kafka in its event pipeline and exception tracking.

- At Foursquare, Kafka powers online-to-online and online-to-offline messaging.

- Kafka powers MailChimp's data pipeline.

Hence, the Hadoop ecosystem provides different components that make it so popular. Thanks to these Hadoop components, several Hadoop job roles are now available. I hope this Hadoop ecosystem tutorial helps you understand the Hadoop family and the roles of its members. If you have any queries, please share them with us in the comment box.