HBase Tutorial

Last updated on 12th Oct 2020, Big Data, Blog, Tutorials

HBase is a distributed data store modeled after Google’s Bigtable and designed to provide quick random access to huge amounts of structured data. This tutorial introduces HBase, explains how to set it up on top of the Hadoop file system, and shows how to interact with it through the HBase shell. It also describes how to connect to HBase from Java and how to perform basic operations through the Java API.

This tutorial is intended for professionals who want to build a career in Big Data analytics using the Hadoop framework. Software professionals, analytics professionals, and ETL developers are the key beneficiaries of this course.

Before you proceed with this tutorial, we assume that you are already familiar with Hadoop’s architecture and APIs, have experience writing basic applications in Java, and have a working knowledge of at least one database.

Since the 1970s, the RDBMS has been the standard solution for data storage and maintenance problems. With the advent of big data, companies realized the benefit of processing it and started opting for solutions like Hadoop.

Hadoop uses a distributed file system to store big data and MapReduce to process it. Hadoop excels at storing and processing huge volumes of data in various formats: structured, semi-structured, or even unstructured.

Limitations of Hadoop

Hadoop can perform only batch processing, and data is accessed only sequentially. That means one has to scan the entire dataset even for the simplest of jobs.

Processing a huge dataset produces another huge dataset, which must also be processed sequentially. At this point, a new solution is needed to access any point of data in a single unit of time (random access).
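The difference between sequential and random access can be illustrated with a small sketch. The snippet below is not Hadoop code; the record layout and the function names `scan_lookup` and `keyed_lookup` are assumptions made purely for illustration.

```python
# Illustrative only: contrasts a full sequential scan with a keyed lookup.
# The record layout and function names are assumptions, not Hadoop APIs.

def scan_lookup(records, wanted_id):
    """Sequential access: examine records one by one until a match is found."""
    examined = 0
    for record_id, value in records:
        examined += 1
        if record_id == wanted_id:
            return value, examined
    return None, examined


def keyed_lookup(index, wanted_id):
    """Random access: jump straight to the record through an index."""
    return index.get(wanted_id)


records = [(i, f"value-{i}") for i in range(100_000)]
index = dict(records)  # building the index is a one-time cost

value, examined = scan_lookup(records, 99_999)
print(value, "found after examining", examined, "records")
print(keyed_lookup(index, 99_999), "found with a single keyed lookup")
```

The scan has to touch every record that precedes the one it wants, while the keyed lookup cost does not grow with the position of the record. Random access databases aim for the second behaviour.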

Hadoop Random Access Databases

Databases such as HBase, Cassandra, CouchDB, Dynamo, and MongoDB store huge amounts of data and provide random access to it.

What is HBase?

HBase is a distributed column-oriented database built on top of the Hadoop file system. It is an open-source project and is horizontally scalable.

Its data model is similar to Google’s Bigtable and is designed to provide quick random access to huge amounts of structured data. It leverages the fault tolerance provided by the Hadoop Distributed File System (HDFS).

It is a part of the Hadoop ecosystem that provides random real-time read/write access to data in the Hadoop File System.

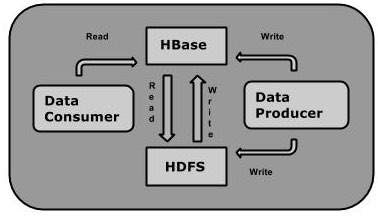

Data can be stored in HDFS either directly or through HBase. Data consumers read and access the data in HDFS randomly using HBase, which sits on top of the Hadoop file system and provides read and write access.

HBase and HDFS

| HDFS | HBase |
|---|---|
| HDFS is a distributed file system suitable for storing large files. | HBase is a database built on top of HDFS. |
| HDFS does not support fast individual record lookups. | HBase provides fast lookups for large tables. |
| It provides high-latency batch processing. | It provides low-latency access to single rows from billions of records (random access). |
| It provides only sequential access to data. | HBase keeps data sorted by row key in indexed HDFS files (HFiles), which enables random access and faster lookups. |

Storage Mechanism in HBase

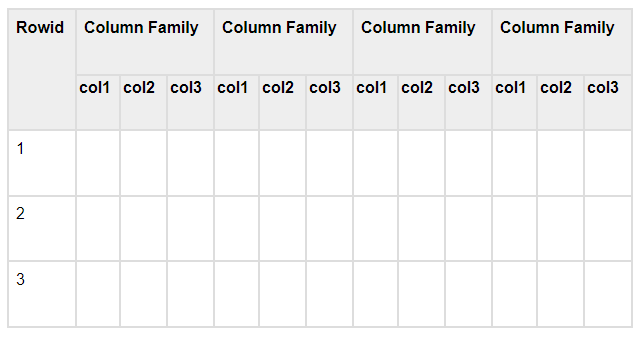

HBase is a column-oriented database, and its tables are sorted by row key. The table schema defines only column families, which hold key-value pairs. A table can have multiple column families, and each column family can have any number of columns. Column values within a family are stored contiguously on disk. Each cell value in the table has a timestamp. In short, in HBase:

- Table is a collection of rows.

- Row is a collection of column families.

- Column family is a collection of columns.

- Column is a collection of key value pairs.
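The hierarchy above can be pictured as nested maps. The following is a toy sketch in plain Python, not the HBase client API: the class `ToyTable`, its methods, and the sample family and column names are all hypothetical and exist only to show how a table, row, column family, column, and timestamp relate to each other.

```python
# A toy model of the HBase logical data layout, using plain dictionaries.
# Table -> row key -> column family -> column qualifier -> {timestamp: value}
# Class and method names here are hypothetical, not the HBase client API.

class ToyTable:
    def __init__(self, column_families):
        self.column_families = set(column_families)  # fixed by the schema
        self.rows = {}

    def put(self, row_key, family, qualifier, value, timestamp):
        if family not in self.column_families:
            raise KeyError(f"unknown column family: {family}")
        cell = (self.rows.setdefault(row_key, {})
                         .setdefault(family, {})
                         .setdefault(qualifier, {}))
        cell[timestamp] = value  # every version is kept under its timestamp

    def get(self, row_key, family, qualifier):
        """Return the most recent version of a cell, or None if absent."""
        versions = self.rows.get(row_key, {}).get(family, {}).get(qualifier)
        if not versions:
            return None
        return versions[max(versions)]

    def scan(self):
        """Return row keys in sorted order, since tables are sorted by row."""
        return sorted(self.rows)


# Sample data invented for this example.
table = ToyTable(["personal", "professional"])
table.put("row2", "personal", "name", "Ravi", timestamp=1)
table.put("row1", "personal", "name", "Raju", timestamp=1)
table.put("row1", "personal", "city", "Hyderabad", timestamp=1)
table.put("row1", "personal", "city", "Delhi", timestamp=2)  # newer version
```

Note that only the column families are declared up front; new columns such as `city` appear simply by writing to them, which mirrors the statement that the schema defines only column families.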

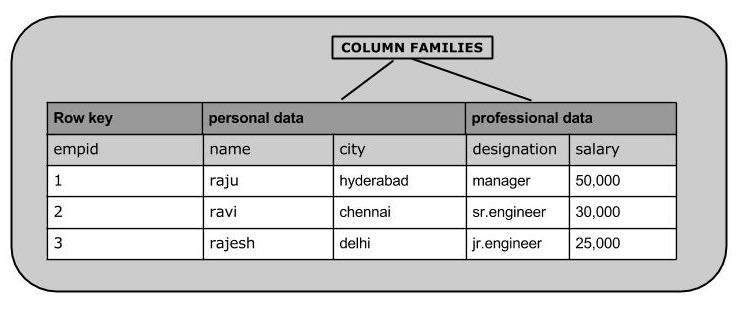

Given below is an example schema of table in HBase.

Column Oriented and Row Oriented

Column-oriented databases store data tables as sections of columns of data rather than as rows of data. In short, they have column families.

| Row-Oriented Database | Column-Oriented Database |
|---|---|
| It is suitable for Online Transaction Processing (OLTP). | It is suitable for Online Analytical Processing (OLAP). |
| Such databases are designed for a small number of rows and columns. | Column-oriented databases are designed for huge tables. |
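To make the two layouts concrete, here is a small sketch that arranges the same records row-wise and column-wise. The sample data and variable names are made up for this example; real databases add compression, indexing, and on-disk formats that are not modeled here.

```python
# Illustrative sketch: the same three records laid out row-wise and column-wise.
# The sample data is made up for this example.

rows = [
    {"id": 1, "name": "Raju", "salary": 50000},
    {"id": 2, "name": "Ravi", "salary": 45000},
    {"id": 3, "name": "Rajesh", "salary": 40000},
]

# Row-oriented layout: all fields of one record sit next to each other.
row_layout = [tuple(r.values()) for r in rows]

# Column-oriented layout: all values of one column sit next to each other.
column_layout = {key: [r[key] for r in rows] for key in rows[0]}

# An analytical query such as "total salary" touches a single column list...
total_salary = sum(column_layout["salary"])

# ...while fetching one complete record is a single read in the row layout.
second_record = row_layout[1]

print(total_salary, second_record)
```

This is why the row layout suits transactional workloads that read and write whole records, while the column layout suits analytical workloads that aggregate a few columns over many rows.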

The following image shows column families in a column-oriented database:

HBase and RDBMS

| HBase | RDBMS |
|---|---|
| HBase is schema-less: it has no concept of a fixed column schema and defines only column families. | An RDBMS is governed by its schema, which describes the whole structure of its tables. |
| It is built for wide tables and is horizontally scalable. | It is thin and built for small tables, and it is hard to scale. |
| HBase has no transactions. | An RDBMS is transactional. |
| It has de-normalized data. | It has normalized data. |
| It is good for semi-structured as well as structured data. | It is good for structured data. |

Features of HBase

1. HBase is linearly scalable.

2. It has automatic failure support.

3. It provides consistent reads and writes.

4. It integrates with Hadoop, both as a source and a destination.

5. It has an easy-to-use Java API for clients.

6. It provides data replication across clusters.

Where to Use HBase

1. Apache HBase is used to have random, real-time read/write access to Big Data.

2. It hosts very large tables on top of clusters of commodity hardware.

3. Apache HBase is a non-relational database modeled after Google’s Bigtable. Just as Bigtable runs on top of the Google File System, Apache HBase works on top of Hadoop and HDFS.

Applications of HBase

- It is used whenever there is a need for write-heavy applications.

- HBase is used whenever we need to provide fast random access to available data.

- Companies such as Facebook, Twitter, Yahoo, and Adobe use HBase internally.

HBase History

| Year | Event |
|---|---|
| Nov 2006 | Google released the paper on Bigtable. |
| Feb 2007 | The initial HBase prototype was created as a Hadoop contribution. |
| Oct 2007 | The first usable HBase was released along with Hadoop 0.15.0. |
| Jan 2008 | HBase became a subproject of Hadoop. |
| Oct 2008 | HBase 0.18.1 was released. |
| Jan 2009 | HBase 0.19.0 was released. |
| Sept 2009 | HBase 0.20.0 was released. |
| May 2010 | HBase became an Apache top-level project. |

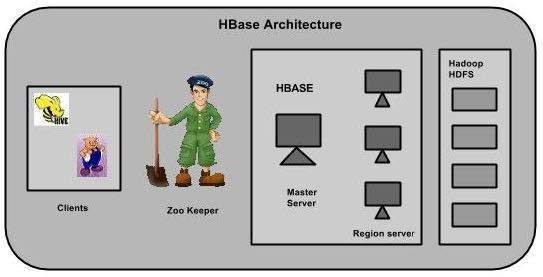

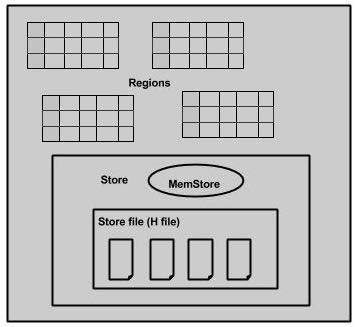

In HBase, tables are split into regions and are served by the region servers. Regions are vertically divided by column families into “Stores”. Stores are saved as files in HDFS. Shown below is the architecture of HBase.

Note: The term ‘store’ is used for regions to explain the storage structure.

HBase has three major components: the client library, a master server, and region servers. Region servers can be added or removed as per requirement.

MasterServer

The master server –

- Assigns regions to the region servers and takes the help of Apache ZooKeeper for this task.

- Handles load balancing of the regions across region servers. It unloads the busy servers and shifts the regions to less occupied servers.

- Maintains the state of the cluster by negotiating the load balancing.

- Is responsible for schema changes and other metadata operations such as creation of tables and column families.
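The load-balancing responsibility described above can be sketched as follows. This is a deliberately simple illustration, not the balancing algorithm HBase actually uses: the function `balance`, the server names, and the region names are all invented for the example.

```python
# Toy load balancer: repeatedly move one region from the busiest server to
# the least busy one until region counts differ by at most one.
# This is an illustration only; it is not HBase's actual balancing algorithm.

def balance(assignments):
    """assignments maps server name -> list of region names (mutated in place)."""
    moves = []
    while True:
        busiest = max(assignments, key=lambda s: len(assignments[s]))
        idlest = min(assignments, key=lambda s: len(assignments[s]))
        if len(assignments[busiest]) - len(assignments[idlest]) <= 1:
            return moves
        region = assignments[busiest].pop()
        assignments[idlest].append(region)
        moves.append((region, busiest, idlest))


# Hypothetical cluster state: one overloaded server and two nearly idle ones.
cluster = {"rs1": ["r1", "r2", "r3", "r4", "r5"], "rs2": ["r6"], "rs3": []}
moves = balance(cluster)

for region, source, target in moves:
    print(f"moved {region} from {source} to {target}")
```

The sketch only counts regions per server. A real balancer would also have to weigh factors such as request load and data locality.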

Regions

Regions are nothing but tables that are split up and spread across the region servers.
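Because a table is sorted by row key, each region can be thought of as covering a contiguous range of keys. The sketch below shows one way to find the region for a given key with a binary search. The split points, server names, and the function `locate_region` are assumptions for illustration and do not reflect HBase internals.

```python
import bisect

# Hypothetical region map: each region serves row keys from its start key
# (inclusive) up to the next region's start key (exclusive).
# Server names and split points are invented for illustration.
region_start_keys = ["", "g", "n", "t"]        # must stay sorted
region_servers = ["rs1", "rs2", "rs1", "rs3"]  # server hosting each region


def locate_region(row_key):
    """Return (region index, server) responsible for the given row key."""
    idx = bisect.bisect_right(region_start_keys, row_key) - 1
    return idx, region_servers[idx]


for key in ["apple", "grape", "zebra"]:
    print(key, "->", locate_region(key))
```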

Region server

The region servers host regions and –

- Communicate with the client and handle data-related operations.

- Handle read and write requests for all the regions under it.

- Decide the size of the region by following the region size thresholds.

When we take a deeper look into the region server, it contains regions and stores as shown below:

The store contains a memstore and HFiles. The memstore is just like a cache memory. Anything that is entered into HBase is stored here initially. Later, the data is transferred and saved in HFiles as blocks, and the memstore is flushed.
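The write path just described (memory first, files later) can be sketched in a few lines. The class `ToyStore`, its flush threshold, and the in-memory list standing in for files on HDFS are assumptions made for this illustration; the real implementation also involves a write-ahead log and compactions, which are left out.

```python
# Toy write path: writes land in an in-memory store first and are flushed
# to an immutable, sorted file once a size threshold is reached.
# The class, the threshold, and the list standing in for HDFS are assumptions.

class ToyStore:
    def __init__(self, flush_threshold=3):
        self.flush_threshold = flush_threshold
        self.memstore = {}   # recent writes, held in memory
        self.hfiles = []     # flushed files, oldest first

    def put(self, key, value):
        self.memstore[key] = value
        if len(self.memstore) >= self.flush_threshold:
            self.flush()

    def flush(self):
        """Write the memstore out as a sorted, immutable file and clear it."""
        if self.memstore:
            self.hfiles.append(tuple(sorted(self.memstore.items())))
            self.memstore = {}

    def get(self, key):
        """Check memory first, then the files from newest to oldest."""
        if key in self.memstore:
            return self.memstore[key]
        for hfile in reversed(self.hfiles):
            for k, v in hfile:
                if k == key:
                    return v
        return None


store = ToyStore(flush_threshold=3)
for key, value in [("row3", "c"), ("row1", "a"), ("row2", "b"), ("row1", "A")]:
    store.put(key, value)

print(len(store.hfiles), "file(s) flushed;", len(store.memstore), "entry in memory")
```

Reads consult the memstore before the flushed files, so the most recent write to a key wins even when an older value is already on disk.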

ZooKeeper

- ZooKeeper is an open-source project that provides services such as maintaining configuration information, naming, and distributed synchronization.

- ZooKeeper has ephemeral nodes representing the different region servers. Master servers use these nodes to discover available servers.

- In addition to availability, the nodes are also used to track server failures and network partitions.

- Clients locate region servers via ZooKeeper.

- In pseudo-distributed and standalone modes, HBase itself takes care of ZooKeeper.
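The idea of an ephemeral node is that it exists only while the session that created it is alive. The toy registry below mimics that behaviour in memory. It is not the ZooKeeper API: the class `ToyRegistry`, the paths, and the session identifiers are hypothetical.

```python
# Toy registry mimicking ephemeral nodes: an entry exists only while the
# session that created it is alive. Names are hypothetical; this is not the
# ZooKeeper API.

class ToyRegistry:
    def __init__(self):
        self._nodes = {}  # path -> owning session id

    def register(self, path, session_id):
        """Create an ephemeral entry owned by the given session."""
        self._nodes[path] = session_id

    def expire_session(self, session_id):
        """Drop every node owned by a session, as happens when a server dies."""
        self._nodes = {p: s for p, s in self._nodes.items() if s != session_id}

    def live_servers(self):
        """Return the paths of all servers that are currently registered."""
        return sorted(self._nodes)


registry = ToyRegistry()
registry.register("/rs/server-a", session_id=1)
registry.register("/rs/server-b", session_id=2)
before = registry.live_servers()

registry.expire_session(2)  # server-b crashes or loses its connection
after = registry.live_servers()
print(before, "->", after)
```

A master that watches the list of live servers learns about a failure simply because the corresponding entry disappears.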

This chapter explains how HBase is installed and initially configured. Java and Hadoop are required to proceed with HBase, so you have to download and install Java and Hadoop on your system.

Pre-Installation Setup

Before installing Hadoop in a Linux environment, we need to set up Linux using SSH (Secure Shell). Follow the steps given below to set up the Linux environment.

Creating a User

First of all, it is recommended to create a separate user for Hadoop to isolate the Hadoop file system from the Unix file system. Follow the steps given below to create a user.

1. Switch to root using the command “su”.

2. Create a user from the root account using the command “useradd username”.

3. Now you can switch to an existing user account using the command “su username”.

Open the Linux terminal and type the following commands to create a user.

- $ su

- password:

- # useradd hadoop

- # passwd hadoop

- New passwd:

- Retype new passwd

SSH Setup and Key Generation

SSH setup is required to perform different operations on the cluster, such as starting and stopping daemons and running distributed daemon shell operations. To authenticate different users of Hadoop, a public/private key pair must be generated for a Hadoop user and shared with the other users.

The following commands generate a key pair using SSH, copy the public key from id_rsa.pub to authorized_keys, and give the owner read and write permissions on the authorized_keys file.

- $ ssh-keygen -t rsa

- $ cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys

- $ chmod 0600 ~/.ssh/authorized_keys

Verify ssh

- $ ssh localhost
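If `ssh localhost` still asks for a password, the permissions on authorized_keys are a common thing to check. The following sketch tests whether a file has exactly the 0600 mode set by the chmod command above. The helper name `has_owner_only_rw` is hypothetical, and the check assumes a Unix-like system.

```python
import os
import stat


def has_owner_only_rw(path):
    """True if the file mode is exactly 0600 (owner read/write, nothing else)."""
    mode = stat.S_IMODE(os.stat(path).st_mode)
    return mode == 0o600


if __name__ == "__main__":
    # Default location used in the commands above.
    keys_file = os.path.expanduser("~/.ssh/authorized_keys")
    if os.path.exists(keys_file):
        print(keys_file, "has mode 0600:", has_owner_only_rw(keys_file))
    else:
        print(keys_file, "does not exist yet")
```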

Installing Java

Java is the main prerequisite for Hadoop and HBase. First of all, verify whether Java is installed on your system using “java -version”, as shown below.

- $ java -version

If everything works fine, it will give you the following output.

java version "1.7.0_71"

Java(TM) SE Runtime Environment (build 1.7.0_71-b13)

Java HotSpot(TM) Client VM (build 25.0-b02, mixed mode)

If java is not installed in your system, then follow the steps given below for installing java.

Step 1

Download Java (JDK <latest version> – X64.tar.gz) from the Oracle Java download page.

The file jdk-7u71-linux-x64.tar.gz will then be downloaded to your system.

Step 2

Generally, you will find the downloaded Java file in the Downloads folder. Verify it and extract the jdk-7u71-linux-x64.tar.gz file using the following commands.

- $ cd Downloads/

- $ ls

- jdk-7u71-linux-x64.tar.gz

- $ tar zxf jdk-7u71-linux-x64.tar.gz

- $ ls

- jdk1.7.0_71 jdk-7u71-linux-x64.tar.gz

Step 3

To make java available to all the users, you have to move it to the location “/usr/local/”. Open root and type the following commands.

- $ su

- password:

- # mv jdk1.7.0_71 /usr/local/

- # exit

Step 4

To set up the PATH and JAVA_HOME variables, add the following commands to the ~/.bashrc file.

- export JAVA_HOME=/usr/local/jdk1.7.0_71

- export PATH=$PATH:$JAVA_HOME/bin

Now apply all the changes into the current running system.

- $ source ~/.bashrc

Step 5

Use the following commands to configure Java alternatives:

- # alternatives --install /usr/bin/java java /usr/local/jdk1.7.0_71/bin/java 2

- # alternatives --install /usr/bin/javac javac /usr/local/jdk1.7.0_71/bin/javac 2

- # alternatives --install /usr/bin/jar jar /usr/local/jdk1.7.0_71/bin/jar 2

- # alternatives --set java /usr/local/jdk1.7.0_71/bin/java

- # alternatives --set javac /usr/local/jdk1.7.0_71/bin/javac

- # alternatives --set jar /usr/local/jdk1.7.0_71/bin/jar

Now verify the installation by running the java -version command from the terminal as explained above.

Downloading Hadoop

After installing Java, you have to install Hadoop. First of all, check whether Hadoop is already installed using the “hadoop version” command as shown below.

- $ hadoop version

If everything works fine, it will give you the following output.

Hadoop 2.6.0

Compiled by jenkins on 2014-11-13T21:10Z

Compiled with protoc 2.5.0

From source with checksum 18e43357c8f927c0695f1e9522859d6a

This command was run using

/home/hadoop/hadoop/share/hadoop/common/hadoop-common-2.6.0.jar

If your system is unable to locate Hadoop, download and install it. Download and extract hadoop-2.6.0 from the Apache Software Foundation using the following commands.

- $ su

- password:

- # cd /usr/local

- # wget http://mirrors.advancedhosters.com/apache/hadoop/common/hadoop-2.6.0/hadoop-2.6.0.tar.gz

- # tar xzf hadoop-2.6.0.tar.gz

- # mkdir hadoop

- # mv hadoop-2.6.0/* hadoop/

- # exit

Installing Hadoop

Install Hadoop in whichever mode you require. Here, we demonstrate HBase functionality in pseudo-distributed mode, so install Hadoop in pseudo-distributed mode.

The following steps are used for installing Hadoop; the sample output of the verification steps below was captured on version 2.4.1.

Step 1 – Setting up Hadoop

You can set Hadoop environment variables by appending the following commands to ~/.bashrc file.

- export HADOOP_HOME=/usr/local/hadoop

- export HADOOP_MAPRED_HOME=$HADOOP_HOME

- export HADOOP_COMMON_HOME=$HADOOP_HOME

- export HADOOP_HDFS_HOME=$HADOOP_HOME

- export YARN_HOME=$HADOOP_HOME

- export HADOOP_COMMON_LIB_NATIVE_DIR=$HADOOP_HOME/lib/native

- export PATH=$PATH:$HADOOP_HOME/sbin:$HADOOP_HOME/bin

- export HADOOP_INSTALL=$HADOOP_HOME

Now apply all the changes into the current running system.

- $ source ~/.bashrc

Step 2 – Hadoop Configuration

You can find all the Hadoop configuration files in the location “$HADOOP_HOME/etc/hadoop”. You need to make changes in those configuration files according to your Hadoop infrastructure.

- $ cd $HADOOP_HOME/etc/hadoop

In order to develop Hadoop programs in Java, you have to reset the Java environment variable in the hadoop-env.sh file by replacing the JAVA_HOME value with the location of Java on your system.

- export JAVA_HOME=/usr/local/jdk1.7.0_71

You will have to edit the following files to configure Hadoop.

core-site.xml

The core-site.xml file contains information such as the port number used for Hadoop instance, memory allocated for file system, memory limit for storing data, and the size of Read/Write buffers.

Open core-site.xml and add the following properties in between the <configuration> and </configuration> tags.

- <configuration>

- <property>

- <name>fs.default.name</name>

- <value>hdfs://localhost:9000</value>

- </property>

- </configuration>
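A configuration file of this shape can also be generated programmatically, which helps avoid typos in the tags. The following is a minimal sketch using only the Python standard library; the helper name `render_site_xml` is hypothetical and not part of Hadoop.

```python
import xml.etree.ElementTree as ET


def render_site_xml(properties):
    """Build a Hadoop-style <configuration> document from a dict of settings."""
    root = ET.Element("configuration")
    for name, value in properties.items():
        prop = ET.SubElement(root, "property")
        ET.SubElement(prop, "name").text = name
        ET.SubElement(prop, "value").text = str(value)
    return ET.tostring(root, encoding="unicode")


# The same single property that the core-site.xml example above defines.
core_site = render_site_xml({"fs.default.name": "hdfs://localhost:9000"})
print(core_site)
```

The output is written on a single line. Hadoop reads the file as XML, so whitespace between the elements does not matter.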

hdfs-site.xml

The hdfs-site.xml file contains information such as the replication factor, the namenode path, and the datanode path on your local file system, that is, the locations where you want to store the Hadoop infrastructure.

Let us assume the following data.

dfs.replication (data replication value) = 1

(In the paths given below, hadoop is the user name, and hadoopinfra/hdfs/namenode is the directory created by the HDFS file system.)

namenode path = /home/hadoop/hadoopinfra/hdfs/namenode

(hadoopinfra/hdfs/datanode is the directory created by the HDFS file system.)

datanode path = /home/hadoop/hadoopinfra/hdfs/datanode

Open this file and add the following properties in between the <configuration> and </configuration> tags.

- <configuration>

- <property>

- <name>dfs.replication</name>

- <value>1</value>

- </property>

- <property>

- <name>dfs.name.dir</name>

- <value>file:///home/hadoop/hadoopinfra/hdfs/namenode</value>

- </property>

- <property>

- <name>dfs.data.dir</name>

- <value>file:///home/hadoop/hadoopinfra/hdfs/datanode</value>

- </property>

- </configuration>

Note: In the above file, all the property values are user-defined and you can make changes according to your Hadoop infrastructure.
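To double-check what a configuration file actually contains, it can be read back into a dictionary. The sketch below parses a copy of the hdfs-site.xml content shown above with the Python standard library; the function name `read_site_xml` and the constant `SAMPLE_HDFS_SITE` are hypothetical.

```python
import xml.etree.ElementTree as ET

# A copy of the hdfs-site.xml properties from the example above.
SAMPLE_HDFS_SITE = """
<configuration>
  <property>
    <name>dfs.replication</name>
    <value>1</value>
  </property>
  <property>
    <name>dfs.name.dir</name>
    <value>file:///home/hadoop/hadoopinfra/hdfs/namenode</value>
  </property>
  <property>
    <name>dfs.data.dir</name>
    <value>file:///home/hadoop/hadoopinfra/hdfs/datanode</value>
  </property>
</configuration>
"""


def read_site_xml(xml_text):
    """Return the name/value pairs of a Hadoop-style configuration document."""
    root = ET.fromstring(xml_text.strip())
    settings = {}
    for prop in root.iter("property"):
        name = (prop.findtext("name") or "").strip()
        value = (prop.findtext("value") or "").strip()
        if name:
            settings[name] = value
    return settings


settings = read_site_xml(SAMPLE_HDFS_SITE)
for name, value in settings.items():
    print(f"{name} = {value}")
```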

yarn-site.xml

This file is used to configure YARN in Hadoop. Open the yarn-site.xml file and add the following property in between the <configuration> and </configuration> tags in this file.

- <configuration>

- <property>

- <name>yarn.nodemanager.aux-services</name>

- <value>mapreduce_shuffle</value>

- </property>

- </configuration>

mapred-site.xml

This file is used to specify which MapReduce framework we are using. By default, Hadoop contains a template of this file named mapred-site.xml.template. First of all, copy mapred-site.xml.template to mapred-site.xml using the following command.

- $ cp mapred-site.xml.template mapred-site.xml

Open mapred-site.xml file and add the following properties in between the <configuration> and </configuration> tags.

- <configuration>

- <property>

- <name>mapreduce.framework.name</name>

- <value>yarn</value>

- </property>

- </configuration>

Verifying Hadoop Installation

The following steps are used to verify the Hadoop installation.

Step 1 – Name Node Setup

Set up the namenode using the command “hdfs namenode -format” as follows.

- $ cd ~

- $ hdfs namenode -format

The expected result is as follows.

10/24/14 21:30:55 INFO namenode.NameNode: STARTUP_MSG:

/************************************************************

STARTUP_MSG: Starting NameNode

STARTUP_MSG: host = localhost/192.168.1.11

STARTUP_MSG: args = [-format]

STARTUP_MSG: version = 2.4.1

…

…

10/24/14 21:30:56 INFO common.Storage: Storage directory /home/hadoop/hadoopinfra/hdfs/namenode has been successfully formatted.

10/24/14 21:30:56 INFO namenode.NNStorageRetentionManager: Going to retain 1 images with txid >= 0

10/24/14 21:30:56 INFO util.ExitUtil: Exiting with status 0

10/24/14 21:30:56 INFO namenode.NameNode: SHUTDOWN_MSG:

/************************************************************

SHUTDOWN_MSG: Shutting down NameNode at localhost/192.168.1.11

************************************************************/

Step 2 – Verifying Hadoop dfs

The following command is used to start dfs. Executing this command will start your Hadoop file system.

- $ start-dfs.sh

The expected output is as follows.

10/24/14 21:37:56

Starting namenodes on [localhost]

localhost: starting namenode, logging to /home/hadoop/hadoop-2.4.1/logs/hadoop-hadoop-namenode-localhost.out

localhost: starting datanode, logging to /home/hadoop/hadoop-2.4.1/logs/hadoop-hadoop-datanode-localhost.out

Starting secondary namenodes [0.0.0.0]

Step 3 – Verifying Yarn Script

The following command is used to start the yarn script. Executing this command will start your yarn daemons.

- $ start-yarn.sh

The expected output is as follows.

starting yarn daemons

starting resourcemanager, logging to /home/hadoop/hadoop-2.4.1/logs/yarn-hadoop-resourcemanager-localhost.out

localhost: starting nodemanager, logging to /home/hadoop/hadoop-2.4.1/logs/yarn-hadoop-nodemanager-localhost.out

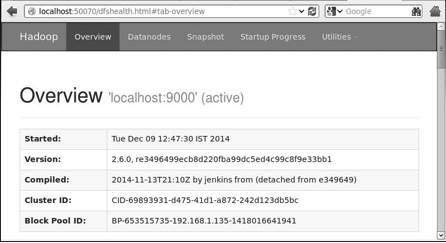

Step 4 – Accessing Hadoop on Browser

The default port number to access Hadoop is 50070. Use the following URL to get Hadoop services in your browser: http://localhost:50070/

Step 5 – Verify all Applications of Cluster

The default port number to access all the applications of the cluster is 8088. Use the following URL to visit this service: http://localhost:8088/
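Before opening a browser, it can be handy to check whether anything is listening on these ports at all. The following sketch attempts a plain TCP connection. The helper name `port_is_open` is hypothetical, and the host `localhost` is an assumption that matches the single-machine setup used in this tutorial.

```python
import socket


def port_is_open(host, port, timeout=1.0):
    """Return True if a TCP connection to host:port succeeds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


if __name__ == "__main__":
    # Ports taken from the two steps above.
    for label, port in [("HDFS web UI", 50070), ("cluster applications UI", 8088)]:
        state = "reachable" if port_is_open("localhost", port) else "not reachable"
        print(f"{label} on port {port}: {state}")
```

A successful connection only shows that some process accepts connections on the port; it does not prove that the process is the expected Hadoop service.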

Installing HBase

We can install HBase in any of the three modes: Standalone mode, Pseudo Distributed mode, and Fully Distributed mode.

Installing HBase in Standalone Mode

Download the latest stable version of HBase from http://www.interior-dsgn.com/apache/hbase/stable/ using the “wget” command, and extract it using the “tar -zxvf” command. See the following commands.

- $ wget http://www.interior-dsgn.com/apache/hbase/stable/hbase-0.98.8-hadoop2-bin.tar.gz

- $ tar -zxvf hbase-0.98.8-hadoop2-bin.tar.gz

Switch to superuser mode and move the HBase folder to /usr/local as shown below.

- $ su

- password: enter your password here

- # mkdir /usr/local/HBase

- # mv hbase-0.98.8-hadoop2/* /usr/local/HBase/

Configuring HBase in Standalone Mode

Before proceeding with HBase, you have to edit the following files and configure HBase.

hbase-env.sh

Set the Java home for HBase by opening the hbase-env.sh file in the conf folder. Edit the JAVA_HOME environment variable and change the existing path to your current JAVA_HOME value as shown below.

- $ cd /usr/local/HBase/conf

- $ gedit hbase-env.sh

This will open the hbase-env.sh file of HBase. Now replace the existing JAVA_HOME value with your current value as shown below.

- export JAVA_HOME=/usr/lib/jvm/java-1.7.0

hbase-site.xml

This is the main configuration file of HBase. Set the data directory to an appropriate location by opening the HBase home folder in /usr/local/HBase. Inside the conf folder, you will find several files; open the hbase-site.xml file as shown below.

- # cd /usr/local/HBase/

- # cd conf

- # gedit hbase-site.xml

Inside the hbase-site.xml file, you will find the <configuration> and </configuration> tags. Within them, set the HBase directory under the property key with the name “hbase.rootdir” as shown below.

- <configuration>

- <!-- Set the path where you want HBase to store its files. -->

- <property>

- <name>hbase.rootdir</name>

- <value>file:/home/hadoop/HBase/HFiles</value>

- </property>

- <!-- Set the path where you want HBase to store its built-in ZooKeeper files. -->

- <property>

- <name>hbase.zookeeper.property.dataDir</name>

- <value>/home/hadoop/zookeeper</value>

- </property>

- </configuration>

With this, the HBase installation and configuration part is successfully complete. We can start HBase by using start-hbase.sh script provided in the bin folder of HBase. For that, open HBase Home Folder and run HBase start script as shown below.

- $cd /usr/local/HBase/bin

- $./start-hbase.sh

If everything goes well, when you run the HBase start script, it will display a message saying that HBase has started.

starting master, logging to /usr/local/HBase/bin/../logs/hbase-tpmaster-localhost.localdomain.out

Installing HBase in Pseudo-Distributed Mode

Let us now check how HBase is installed in pseudo-distributed mode.

Configuring HBase

Before proceeding with HBase, configure Hadoop and HDFS on your local system or on a remote system and make sure they are running. Stop HBase if it is running.

hbase-site.xml

Edit hbase-site.xml file to add the following properties.

- <property>

- <name>hbase.cluster.distributed</name>

- <value>true</value>

- </property>

This property specifies the mode in which HBase should run. In the same file, change hbase.rootdir from the local file system to the address of your HDFS instance, using the hdfs:// URI syntax. We are running HDFS on the localhost at port 9000, the port set for fs.default.name in core-site.xml.

- <property>

- <name>hbase.rootdir</name>

- <value>hdfs://localhost:9000/hbase</value>

- </property>
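The HBase root directory has to point at the same NameNode address that Hadoop itself uses (the fs.default.name value in core-site.xml), otherwise HBase cannot reach HDFS. The sketch below compares the two values. The function name `rootdir_matches_namenode` is hypothetical, and the addresses passed to it are examples only.

```python
from urllib.parse import urlparse


def rootdir_matches_namenode(fs_default_name, hbase_rootdir):
    """True if both URIs use the same scheme, host, and port."""
    fs = urlparse(fs_default_name)
    root = urlparse(hbase_rootdir)
    return (fs.scheme, fs.netloc) == (root.scheme, root.netloc)


# Example values: the first pair agrees, the second pair uses different ports.
print(rootdir_matches_namenode("hdfs://localhost:9000", "hdfs://localhost:9000/hbase"))
print(rootdir_matches_namenode("hdfs://localhost:9000", "hdfs://localhost:8030/hbase"))
```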

Starting HBase

After configuration is over, browse to HBase home folder and start HBase using the following command.

- $cd /usr/local/HBase

- $bin/start-hbase.sh

Note: Before starting HBase, make sure Hadoop is running.

Checking the HBase Directory in HDFS

HBase creates its directory in HDFS. To see the created directory, browse to the Hadoop home folder and type the following command.

- $ ./bin/hadoop fs -ls /hbase

If everything goes well, it will give you the following output.

Found 7 items

drwxr-xr-x - hbase users 0 2014-06-25 18:58 /hbase/.tmp

drwxr-xr-x - hbase users 0 2014-06-25 21:49 /hbase/WALs

drwxr-xr-x - hbase users 0 2014-06-25 18:48 /hbase/corrupt

drwxr-xr-x - hbase users 0 2014-06-25 18:58 /hbase/data

-rw-r--r-- 3 hbase users 42 2014-06-25 18:41 /hbase/hbase.id

-rw-r--r-- 3 hbase users 7 2014-06-25 18:41 /hbase/hbase.version

drwxr-xr-x - hbase users 0 2014-06-25 21:49 /hbase/oldWALs

Starting and Stopping a Master

Using “local-master-backup.sh”, you can start up to 10 servers. Open the HBase home folder and execute the following command to start them; each number identifies a specific backup master.

- $ ./bin/local-master-backup.sh start 2 4

To kill a backup master, you need its process ID, which is stored in a file named “/tmp/hbase-USER-X-master.pid”. You can kill the backup master using the following command.

- $ cat /tmp/hbase-user-1-master.pid | xargs kill -9

Starting and Stopping RegionServers

You can run multiple region servers from a single system using the following command.

- $ ./bin/local-regionservers.sh start 2 3

To stop a region server, use the following command.

- $ ./bin/local-regionservers.sh stop 3

Starting HBaseShell

After installing HBase successfully, you can start the HBase shell. The sequence of steps to follow is given below. Open the terminal and log in as superuser.

Start Hadoop File System

Browse to the sbin folder of the Hadoop home directory and start the Hadoop file system as shown below.

- $ cd $HADOOP_HOME/sbin

- $ ./start-all.sh

Start HBase

Browse to the HBase root directory and start HBase.

- $ cd /usr/local/HBase

- $ ./bin/start-hbase.sh

Start HBase Master Server

This is done from the same directory. Start it as shown below; the number identifies the specific server.

- $ ./bin/local-master-backup.sh start 2

Start Region

Start the region server as shown below.

- $ ./bin/local-regionservers.sh start 3

Start HBase Shell

You can start HBase shell using the following command.

- $ cd bin

- $ ./hbase shell

This will give you the HBase shell prompt as shown below.

2014-12-09 14:24:27,526 INFO [main] Configuration.deprecation: hadoop.native.lib is deprecated. Instead, use io.native.lib.available

HBase Shell; enter 'help<RETURN>' for list of supported commands.

Type "exit<RETURN>" to leave the HBase Shell

Version 0.98.8-hadoop2, r6cfc8d064754251365e070a10a82eb169956d5fe, Fri Nov 14 18:26:29 PST 2014

hbase(main):001:0>

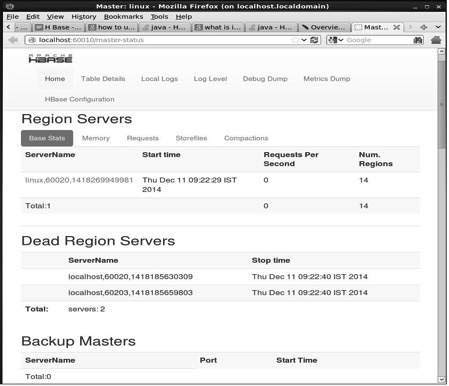

HBase Web Interface

To access the web interface of HBase, type the following url in the browser.

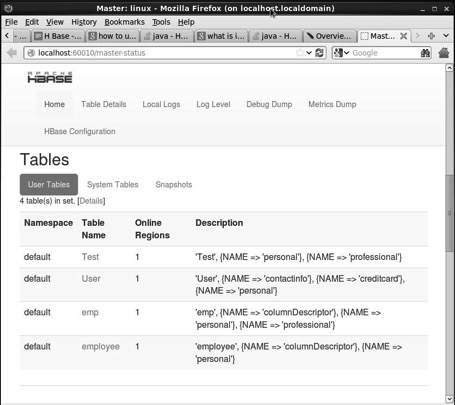

This interface lists your currently running Region servers, backup masters and HBase tables.

HBase Region servers and Backup Masters

HBase Tables

Setting Java Environment

We can also communicate with HBase using Java libraries, but before accessing HBase through the Java API, you need to set the classpath for those libraries.

Setting the Classpath

Before proceeding with programming, set the classpath to the HBase libraries in the .bashrc file. Open .bashrc in any editor as shown below.

- $ gedit ~/.bashrc

Set the classpath for the HBase libraries (the lib folder in HBase) in it as shown below.

- export CLASSPATH=$CLASSPATH:/home/hadoop/hbase/lib/*

This prevents the “class not found” exception while accessing HBase using the Java API.
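To see which JAR files the `lib/*` wildcard would pick up, the classpath can also be assembled explicitly. The sketch below lists the JARs in a directory and joins them with the platform's path separator. The function name `build_classpath` is hypothetical, and the directory is whatever lib folder your HBase installation uses.

```python
import glob
import os


def build_classpath(lib_dir, existing=""):
    """Append every .jar file in lib_dir to an existing classpath string."""
    jars = sorted(glob.glob(os.path.join(lib_dir, "*.jar")))
    parts = [p for p in existing.split(os.pathsep) if p] + jars
    return os.pathsep.join(parts)


if __name__ == "__main__":
    # Path taken from the export line above; adjust it to your installation.
    lib_dir = "/home/hadoop/hbase/lib"
    print(build_classpath(lib_dir, os.environ.get("CLASSPATH", "")))
```

If the directory does not exist or contains no JAR files, the function simply returns the existing classpath unchanged, which makes a wrong path easy to spot.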