BIG DATA Tutorial

Last updated on 18th Sep 2020, Big Data, Blog, Tutorials

In today's digital world, with its technological advancements, we exchange large amounts of data daily, in the range of terabytes or petabytes.

If we exchange that much data every day, we also need to maintain it and store it somewhere. Big Data is the solution for handling large volumes of data that arrive at high velocity and in many varieties.

Big Data

- Big Data is a collection of data so large, complex, and unorganized that it defies the common data management methods that were designed and used before this rise in data.

- Size alone is not enough to describe Big Data; certain characteristics classify data as Big Data.


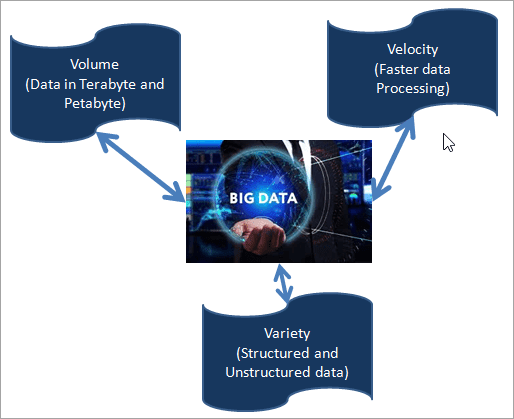

Characteristics of BigData

Big Data has three main characteristics, known as the three V’s. If data satisfies these characteristics, it is treated as Big Data:

- Volume

- Velocity

- Variety

Volume: The data must be huge in volume. Big Data systems are designed to store and maintain data in the terabyte or petabyte range and to support CRUD (Create, Read, Update and Delete) operations on it, although some Big Data stores, such as HDFS, are optimized for writing data once and reading it many times rather than for in-place updates.

Velocity: Velocity is the speed at which data is generated and processed. For example, social media platforms need to exchange data within a fraction of a second, and Big Data is well suited to this. Hence, velocity, the processing speed of data, is another characteristic.

Variety: In social media, we deal with unstructured data such as audio or video recordings and images. Other sectors, such as banking, need structured and semi-structured data. Big Data can maintain all these types of data in one place.

Variety means different types of data (structured, semi-structured, and unstructured) coming from multiple sources.

Structured Data: Data that has a proper structure, i.e., data that can easily be stored in tabular form in a relational database such as Oracle, SQL Server, or MySQL, is known as structured data. It can be processed and analyzed easily and efficiently.

Semi-Structured Data: Semi-structured data is data that is not fully formatted. It is not stored in relational tables, but it can still be read and processed fairly easily because it contains tags, comma-separated values, or similar markers. Examples of semi-structured data are XML files and CSV files.

Unstructured Data: Unstructured data has no structure. It can take any form and has no predefined data model, so it cannot be stored in traditional databases and is complex to search and process.

The volume of unstructured data is also very high. Examples of unstructured data are email bodies, audio, video, images, and archived documents.
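To make the three kinds of data concrete, here is a minimal Python sketch using only the standard library. It assumes made-up sample records: a small in-memory SQLite table stands in for structured data, an XML snippet for semi-structured data, and a sentence from an email body for unstructured data. The point is how much work each kind needs before it can be queried, not a recommended parsing approach.

```python
import re
import sqlite3
import xml.etree.ElementTree as ET

# Structured: rows with a fixed schema, queryable directly with SQL.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE accounts (id INTEGER PRIMARY KEY, owner TEXT, balance REAL)")
conn.executemany(
    "INSERT INTO accounts (id, owner, balance) VALUES (?, ?, ?)",
    [(1, "Asha", 2500.0), (2, "Ravi", 900.0)],
)
rich = conn.execute("SELECT owner FROM accounts WHERE balance > 1000").fetchall()

# Semi-structured: no table schema, but tags describe each value.
xml_text = """
<orders>
  <order id="A1"><item>phone</item><qty>2</qty></order>
  <order id="A2"><item>laptop</item></order>
</orders>
"""
root = ET.fromstring(xml_text.strip())
# The second order has no <qty> tag, so we fall back to a default of 1.
orders = [
    (o.get("id"), o.findtext("item"), int(o.findtext("qty", default="1")))
    for o in root.findall("order")
]

# Unstructured: free text with no data model; values must be extracted ad hoc.
email_body = "Hi team, the meeting moved to 3pm. Call me at 555-0142 if needed."
phone_numbers = re.findall(r"\b\d{3}-\d{4}\b", email_body)

print(rich)           # [('Asha',)]
print(orders)         # [('A1', 'phone', 2), ('A2', 'laptop', 1)]
print(phone_numbers)  # ['555-0142']
```

The structured rows answer a SQL query directly, the XML needs tag-by-tag parsing with a default for the missing `<qty>`, and the free text only yields a value through a pattern that had to be guessed in advance.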

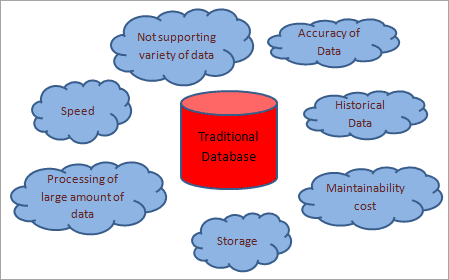

Challenges Of Traditional Databases

- Traditional databases do not support a variety of data, i.e., they cannot handle unstructured and semi-structured data well.

- Traditional databases are slow when dealing with large amounts of data.

- In traditional databases, processing or analyzing large amounts of data is very difficult.

- Traditional databases are not designed to store data in the terabyte or petabyte range.

- Traditional databases struggle to handle historical data and reports.

- Data must be cleaned up (purged) from the database after a certain amount of time.

- Maintaining large amounts of data in a traditional database is very expensive.

- Data accuracy is lower in traditional databases because full historical data is not kept.

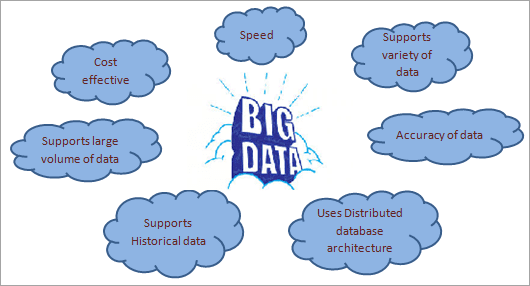

Big Data Benefits Over Traditional Database

- Big Data systems can handle, manage, and process different types of data: structured, semi-structured, and unstructured.

- It is cost-effective for maintaining large amounts of data because it works on a distributed system.

- Big Data techniques let us save large amounts of data for a long time, which makes it easy to handle historical data and generate accurate reports.

- Data processing is very fast, which is why social media platforms use Big Data techniques.

- Data accuracy is a big advantage of Big Data.

- It allows users to make efficient business decisions based on current and historical data.

- Error Handling, Versio
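The benefits above mention that Big Data works on a distributed system. One common way such systems spread records across machines is hash partitioning; the Python sketch below is a toy illustration of that idea, not how any particular product works. The node names and the `node_for` and `partition` helpers are hypothetical, and real systems add replication, rebalancing, and failure handling.

```python
import hashlib
from collections import defaultdict

NODES = ["node-1", "node-2", "node-3"]  # hypothetical cluster members


def node_for(key: str, nodes=NODES) -> str:
    """Pick a node for a record by hashing its key (stable across runs)."""
    digest = hashlib.sha256(key.encode("utf-8")).hexdigest()
    return nodes[int(digest, 16) % len(nodes)]


def partition(records, nodes=NODES):
    """Group records by the node responsible for storing them."""
    placement = defaultdict(list)
    for rec in records:
        placement[node_for(rec["customer_id"], nodes)].append(rec)
    return dict(placement)


records = [{"customer_id": f"C{i:03d}", "amount": i * 10} for i in range(9)]
placement = partition(records)
for node, recs in sorted(placement.items()):
    print(node, [r["customer_id"] for r in recs])
```

Taking the hash modulo the node count keeps the example short, but it moves most keys whenever a node is added or removed, which is why many production stores use consistent hashing instead.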

Challenges And Risks In BigData

Challenges:

1. One of the major challenges in Big Data is managing large amounts of data. Data now arrives from many sources and in many formats, so managing it properly is a big challenge for companies. For example, generating a report that covers the last 20 years requires saving and maintaining 20 years of the system's data. To produce an accurate report, only relevant data should be put into the system; irrelevant or unnecessary data makes maintaining that volume even harder.

2. Another challenge is synchronizing the different types of data. Because Big Data combines structured, unstructured, and semi-structured data from different sources, synchronizing it and keeping it consistent is very difficult.

3. Companies also face a shortage of experts who can implement solutions to the problems in their systems. There is a big talent gap in this field.

4. Meeting compliance requirements is expensive.

5. Collecting, aggregating, storing, analyzing, and reporting on Big Data is costly, and the organization must be able to manage all these costs.
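Challenge 2, synchronizing data of different types from different sources, can be illustrated with a small Python sketch. It assumes two hypothetical sources describing the same customers with different field names: a CSV export from a relational system and a JSON feed from an application. Each source is mapped onto one common schema, and records that disagree are reported instead of being silently overwritten.

```python
import csv
import io
import json

# Source 1: CSV export from a relational system (hypothetical columns).
csv_text = "id,name,city\n1,Asha,Pune\n2,Ravi,Delhi\n"
# Source 2: JSON feed from an app, with different field names (hypothetical).
json_text = (
    '[{"customerId": 2, "fullName": "Ravi", "location": "Mumbai"}, '
    '{"customerId": 3, "fullName": "Meena", "location": "Chennai"}]'
)


def from_csv(text):
    """Map CSV rows onto the common {id: {name, city}} schema."""
    return {int(r["id"]): {"name": r["name"], "city": r["city"]}
            for r in csv.DictReader(io.StringIO(text))}


def from_json(text):
    """Map JSON objects onto the same common schema."""
    return {int(r["customerId"]): {"name": r["fullName"], "city": r["location"]}
            for r in json.loads(text)}


def merge(*sources):
    """Combine sources; collect records that disagree instead of overwriting."""
    merged, conflicts = {}, []
    for source in sources:
        for cid, rec in source.items():
            if cid in merged and merged[cid] != rec:
                conflicts.append((cid, merged[cid], rec))
                continue  # keep the first value; a real pipeline needs a rule here
            merged[cid] = rec
    return merged, conflicts


merged, conflicts = merge(from_csv(csv_text), from_json(json_text))
print(sorted(merged))  # [1, 2, 3]
print(conflicts)       # [(2, {'name': 'Ravi', 'city': 'Delhi'}, {'name': 'Ravi', 'city': 'Mumbai'})]
```

Customer 2 appears in both sources with different cities, so it is reported as a conflict. The "keep the first value" rule is only a placeholder; deciding which source wins (latest timestamp, most trusted system, and so on) is exactly the kind of business rule that makes this challenge hard.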

Risks:

1. Big Data can handle a variety of data, but if companies do not understand their requirements properly and do not control their data sources, the results will be flawed. Investigating and correcting those results then takes a lot of time and money.

2. Data security is another risk. A large store of valuable data is an attractive target for theft, and attackers may steal and sell important company information, including historical data.

3. Data privacy is another risk. Personal and sensitive data must be protected from attackers and handled in line with all applicable privacy policies.
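For the privacy risk above, one common safeguard is to pseudonymize personal fields before data reaches an analytics store. The Python sketch below replaces assumed sensitive fields with a keyed hash (HMAC-SHA-256 from the standard library), so the same email always maps to the same token and datasets can still be joined, but the raw value is not stored. The key, the field list, and the 16-character truncation are illustrative assumptions; pseudonymization alone may not satisfy every privacy policy, and the key itself must be protected.

```python
import hashlib
import hmac

SECRET_KEY = b"replace-with-a-key-from-a-secrets-manager"  # hypothetical placeholder
SENSITIVE_FIELDS = {"email", "phone"}  # assumption: which fields count as personal data


def pseudonymize(value: str, key: bytes = SECRET_KEY) -> str:
    """Replace a personal value with a keyed hash (same input, same token)."""
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()[:16]


def protect(record: dict) -> dict:
    """Return a copy of the record with sensitive fields pseudonymized."""
    return {k: pseudonymize(v) if k in SENSITIVE_FIELDS else v for k, v in record.items()}


raw = {"email": "asha@example.com", "phone": "555-0142", "city": "Pune", "spend": 1200}
safe = protect(raw)
print(safe["city"], safe["spend"])             # Pune 1200
print(safe["email"] != raw["email"])           # True
print(protect(raw)["email"] == safe["email"])  # True: joins across datasets still work
```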

Big Data Technologies

Following are the technologies that can be used to manage Big Data:

1. Apache Hadoop

2. Microsoft HDInsight

3. NoSQL

4. Hive

5. Sqoop

6. Big Data in Excel

- 1) Apache Hadoop

- 2) Lumify

- 3) Apache Storm

- 4) Apache Samoa

- 5) Elasticsearch

- 6) MongoDB

- 7) HPCC System BigData

- 1.Banking

- 2.Media and Entertainment

- 3.Healthcare Providers

- 4.Insurance

- 5.Education

- 6.Retail

- 7.Manufacturing

- 8.Government

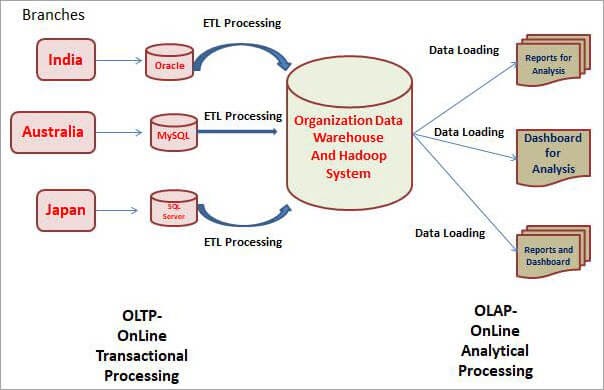

- Data Warehouse is a basic concept that we need to understand before discussing Hadoop or BigData Testing.

- Data Warehouse is a kind of database that contains all the data pulled from multiple sources or multiple database types through the “ETL” (which is the Extract, Transform and Load) process. Once the data is ready in the Data Warehouse, we can use it for analytical purposes.

- So for analysis, we can generate reports from the data available in the Data Warehouse. Multiple charts and reports can be generated using Business Intelligence Tools.

- We require Data Warehouse for analytical purposes to grow the business and make appropriate decisions for the organizations

- Three things are happening in this process, first is we have pulled the data from multiple sources and put it in a single location that is Data Warehouse.

- Here we use the “ETL” process, thus while loading the data from multiple sources to one place, we will apply it in Transformation roots and then we can use various kinds of ETL tools here.

- Once the data is ready into the Data Warehouse, we can generate various reports to analyze the business data by using Business Intelligence (BI) Tools or we also call them Reporting Tools. The tools like Tableau or Cognos can be used for generating the Reports and DashBoards for analyzing the data for business.

- Databases that are maintained locally and used for transactional purposes are called OLTP i.e. Online Transaction Processing. The day to day transactions will be stored here and updated immediately and that’s why we called them the OLTP System.

- Here we use Traditional Databases, we have multiple tables and there are relationships, thus everything is systematically planned as per the database. We are not using this data for analytical purposes. Here, we can use classical RDBMS databases like Oracle, MySQL, SQL Server, etc.

- When we come to the Data Warehouse part, we use Teradata or Hadoop Systems, which are also a kind of database but the data in a DataWarehouse is usually utilized for analytical purposes and is called OLAP or Online Analytical Processing.

- Here, the data can be updated on a quarterly, half-yearly or yearly basis. Sometimes the data is updated “Offerly” as well, where Offerly means the data is updated and fetched for analysis per customer requirements.

- Also, the data for analysis is not updated daily because we will get the data from multiple sources, on a scheduled basis and we can perform this ETL task. This is how the Online Analytical Processing System works.

Tools To Use Big Data Concepts

Enlisted below are the open-source tools that can help to use Big Data concepts:Applications of Big

Following are the domains where it is used:

BigData And Data Warehouse

OLTP

OLAP
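The ETL and OLAP flow described in this section can be sketched end to end in Python, with an in-memory SQLite database standing in for the Data Warehouse. Everything here is an assumption for illustration: two hypothetical OLTP sources with different date and amount formats are extracted, transformed into one format, loaded into a `sales` table, and then summarized per quarter and region, the kind of aggregate a BI tool such as Tableau or Cognos would chart.

```python
import sqlite3

# Extract: rows from two hypothetical OLTP sources with different formats.
store_sales = [("2020-01-15", "Pune", "1,200.50"), ("2020-04-02", "Delhi", "800.00")]
web_sales = [{"date": "15/02/2020", "region": "pune", "amount": 300.0}]


def transform_store(row):
    """Normalize a store row: strip thousands separators from the amount."""
    date, region, amount = row
    return date, region.title(), float(amount.replace(",", ""))


def transform_web(row):
    """Normalize a web row: convert DD/MM/YYYY to ISO dates, fix region case."""
    day, month, year = row["date"].split("/")
    return f"{year}-{month}-{day}", row["region"].title(), float(row["amount"])


# Load: write the cleaned rows into a single warehouse table.
warehouse = sqlite3.connect(":memory:")
warehouse.execute("CREATE TABLE sales (sale_date TEXT, region TEXT, amount REAL)")
warehouse.executemany(
    "INSERT INTO sales VALUES (?, ?, ?)",
    [transform_store(r) for r in store_sales] + [transform_web(r) for r in web_sales],
)

# OLAP-style query: aggregate by quarter and region for a report.
report = warehouse.execute(
    """
    SELECT substr(sale_date, 1, 4) || '-Q' ||
           ((CAST(substr(sale_date, 6, 2) AS INTEGER) + 2) / 3) AS quarter,
           region,
           SUM(amount)
    FROM sales
    GROUP BY quarter, region
    ORDER BY quarter, region
    """
).fetchall()
print(report)  # [('2020-Q1', 'Pune', 1500.5), ('2020-Q2', 'Delhi', 800.0)]
```

In a real warehouse this job would run on a schedule and use dedicated ETL tools; the aggregate query at the end is what distinguishes the analytical (OLAP) use of the data from the row-by-row transactional (OLTP) use.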

Where does BigData come into the picture?

Big Data is data that exceeds the storage and processing capacity of conventional databases. It comes in structured and unstructured formats, so local RDBMS systems cannot handle it.

This kind of data is generated in terabytes (TB), petabytes (PB), or more, and it is growing rapidly. It comes from many sources: social networks such as Facebook and WhatsApp; e-commerce sites such as Amazon and Flipkart; email services such as Gmail, Yahoo, and Rediff; and Google and other search engines. Mobile phones also generate Big Data, such as SMS data, call recordings, and call logs.

Conclusion

Big Data is a solution for handling large amounts of data efficiently and securely, and it also maintains historical data. This technology has many advantages, which is why many companies want to switch to Big Data.